Humanoid robots—machines built to walk, sense, manipulate objects, and interact with humans—represent the most ambitious class of modern robotics.

Their ability to navigate complex environments, perform coordinated movements, and make real-time decisions raises a central question: how do humanoid robots work?

Understanding this requires looking inside the robot’s mechanical structure, sensors, AI systems, learning algorithms, and power architecture.

This article breaks down each core subsystem to explain how humanoid robots work from an engineering and executive perspective.

What Makes Humanoid Robots Unique?

Unlike traditional industrial robots designed for fixed, controlled environments, humanoid robots are built to perform tasks in spaces created for people—factories, hospitals, offices, and eventually homes.

Their human-like morphology (head, torso, arms, hands, and legs) enables compatibility with existing tools and workflows.

Robots such as Tesla Optimus, Boston Dynamics Atlas, and Agility Digit show that a working humanoid is fundamentally an integration problem: mechanical, computational, and AI subsystems must operate as one cohesive, adaptive machine.

Their operation depends on real-time coordination between sensing, planning, and control—far beyond what traditional robotic arms or wheeled platforms require.

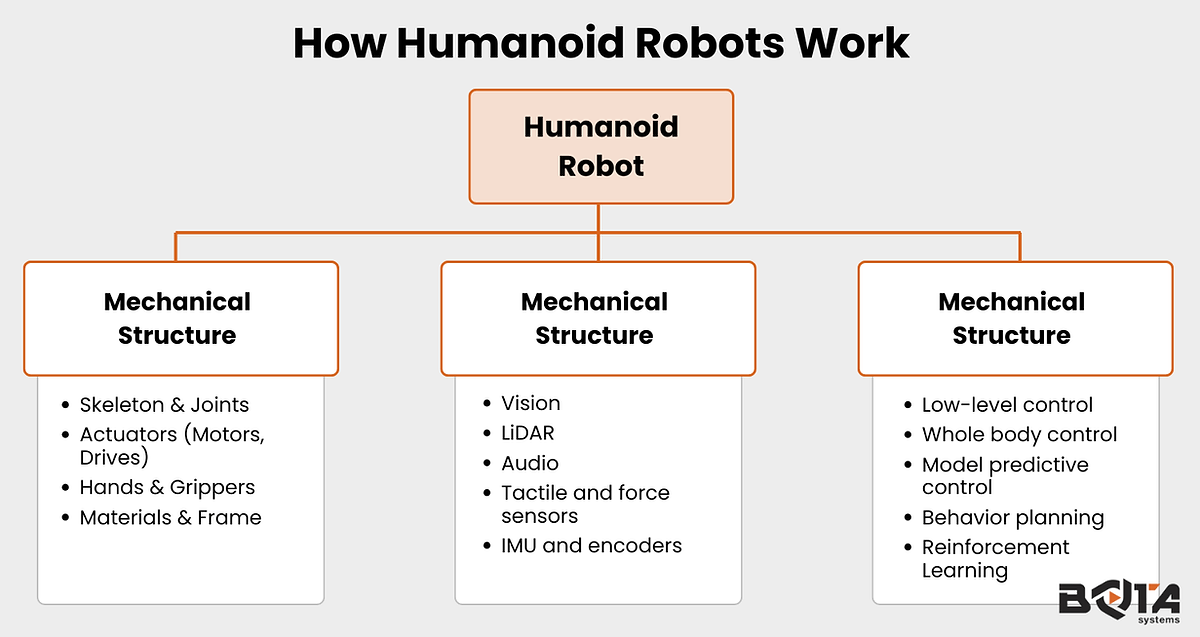

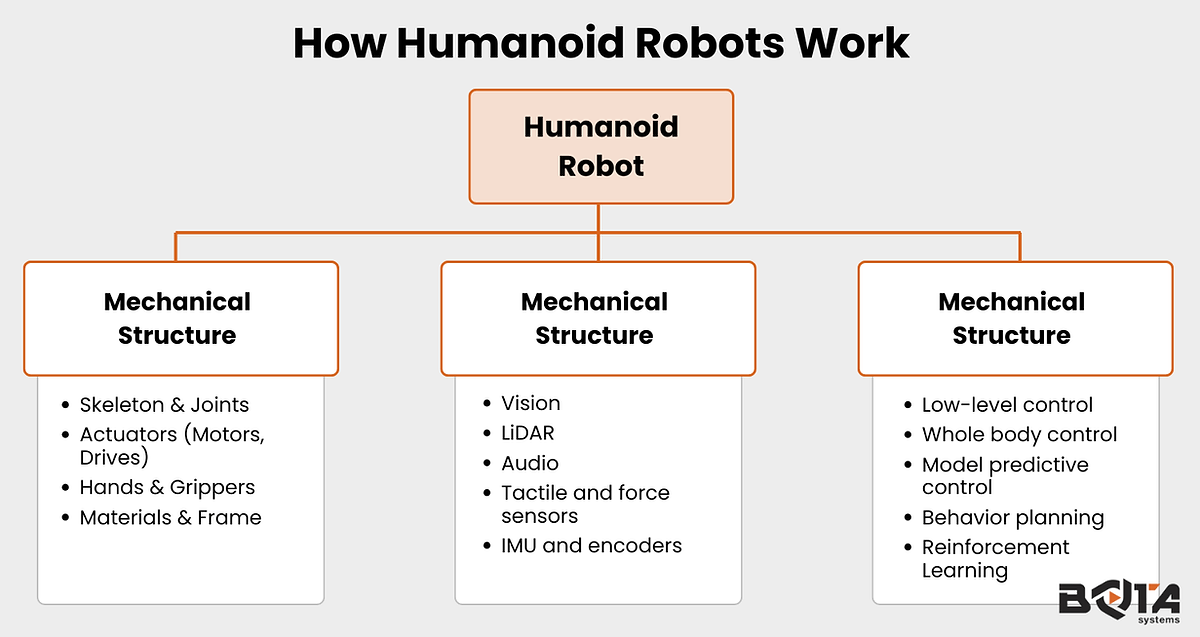

Core Components: The Internal Architecture of Humanoid Robots

Mechanical Structure

The mechanical foundation of a humanoid robot is a multi-degree-of-freedom articulated kinematic chain, typically containing 20–40 active joints. Each joint (hips, knees, ankles, shoulders, elbows, wrists, and neck) uses revolute or spherical configurations driven by electric or hydraulic actuators.

Precision gear trains such as harmonic drives, planetary gears, or tendon-based transmissions convert motor torque into smooth, human-like motion.

The load-bearing chassis uses aluminum, titanium, or high-strength steel frames, often reinforced with carbon-fiber or polymer composites to reduce weight.

Structural components are validated using finite element analysis (FEA) to ensure they withstand dynamic loads such as impacts, rapid deceleration, and single-leg stance during walking.

To support stable locomotion, engineers carefully optimize mass distribution to maintain the center of mass (CoM) within controllable limits—an essential requirement for gait generation and disturbance rejection.

This mechanical architecture is the physical backbone of how humanoid robots work.
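As a rough illustration of the center-of-mass requirement, the sketch below computes the CoM of a set of links treated as point masses and checks whether its ground projection falls inside a rectangular support area. The link masses, positions, and rectangular foot region are hypothetical values chosen for illustration; a real robot would use its full kinematic model and the actual support polygon.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def center_of_mass(masses: Sequence[float], positions: Sequence[Vec3]) -> Vec3:
    """Mass-weighted average of link positions (links treated as point masses)."""
    total = sum(masses)
    if total <= 0:
        raise ValueError("total mass must be positive")
    x = sum(m * p[0] for m, p in zip(masses, positions)) / total
    y = sum(m * p[1] for m, p in zip(masses, positions)) / total
    z = sum(m * p[2] for m, p in zip(masses, positions)) / total
    return (x, y, z)


def com_over_support(com: Vec3,
                     x_range: Tuple[float, float],
                     y_range: Tuple[float, float]) -> bool:
    """True if the CoM ground projection lies inside an axis-aligned rectangle."""
    return (x_range[0] <= com[0] <= x_range[1]
            and y_range[0] <= com[1] <= y_range[1])


# Hypothetical three-link model: legs, torso, head (kilograms, metres).
masses = [20.0, 30.0, 5.0]
positions = [(0.00, 0.0, 0.45), (0.02, 0.0, 1.00), (0.03, 0.0, 1.50)]
com = center_of_mass(masses, positions)
print(com, com_over_support(com, (-0.05, 0.15), (-0.10, 0.10)))
```

This static check is only a first approximation. During dynamic walking the controller also has to account for acceleration, which is where quantities such as the Zero Moment Point come in.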

Sensors: How Robots Perceive Their Environment

Perception systems let a humanoid robot build a real-time model of the world, which its planning and control layers then act on.

Humanoids integrate multiple sensing modalities:

Vision Systems

RGB or stereo cameras

Depth sensors (ToF, structured light)

Compact LiDAR units

These inputs feed into SLAM, visual odometry, and 3D mapping, enabling the robot to localize itself, detect obstacles, and interpret human gestures and tasks.

Auditory Systems

Microphone arrays

Beamforming and sound-source localization

Speech recognition models

These allow humanoid robots to respond to verbal commands even in noisy environments.
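Sound-source localization can be illustrated with the simplest possible case: two microphones and a distant source. The sketch below converts a measured time difference of arrival into a bearing angle. It assumes a far-field source and a fixed speed of sound, and the function name and sample spacing are illustrative; practical microphone arrays use more elements and more robust estimators.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly 20 °C (assumed constant)


def direction_of_arrival(delay_s: float, mic_spacing_m: float) -> float:
    """Bearing (rad) from broadside for a far-field source and two microphones.

    delay_s is the arrival-time difference between the two microphones.
    """
    if mic_spacing_m <= 0:
        raise ValueError("microphone spacing must be positive")
    ratio = SPEED_OF_SOUND * delay_s / mic_spacing_m
    # Clamp to guard against noise pushing the ratio slightly outside [-1, 1].
    ratio = max(-1.0, min(1.0, ratio))
    return math.asin(ratio)


# Hypothetical reading: 0.1 ms delay across microphones spaced 10 cm apart.
angle = direction_of_arrival(delay_s=1.0e-4, mic_spacing_m=0.10)
print(math.degrees(angle))
```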

Proprioceptive Sensors

High-resolution joint encoders

Six-axis force–torque sensors at wrists and ankles

Distributed tactile pads

9-DoF IMUs

Together, these sensors provide a detailed understanding of joint states, contact forces, posture, and balance—essential for coordinated locomotion and manipulation.
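One simple way to fuse IMU signals into a posture estimate is a complementary filter, sketched below for a single tilt angle. This is an illustrative technique, not necessarily what any particular humanoid uses; the blend factor `alpha` and the sample readings are assumptions.

```python
def complementary_filter(prev_angle: float,
                         gyro_rate: float,
                         accel_angle: float,
                         dt: float,
                         alpha: float = 0.98) -> float:
    """Blend the integrated gyro rate (smooth, but drifts) with the
    accelerometer-derived angle (noisy, but drift-free)."""
    gyro_angle = prev_angle + gyro_rate * dt
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle


# Hypothetical case: the gyro reports no rotation while the accelerometer
# consistently indicates a 0.1 rad tilt; the estimate converges toward 0.1.
angle = 0.0
for _ in range(500):
    angle = complementary_filter(angle, gyro_rate=0.0, accel_angle=0.1, dt=0.01)
print(angle)
```

A higher `alpha` trusts the gyroscope more over short time scales, while the accelerometer term slowly corrects accumulated drift.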

Control Algorithms: The Brain Behind Movement

To understand how humanoid robots work, one must understand the control stack.

Control algorithms govern every aspect of humanoid robot motion, from maintaining balance to executing complex whole-body tasks. At the lowest level, PID controllers regulate joint torques and positions, ensuring stable and responsive actuation.
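A minimal sketch of the joint-level PID loop described above might look like the following. The gains, loop rate, and joint name are hypothetical, and production controllers typically add features such as integral anti-windup and derivative filtering that are omitted here.

```python
from dataclasses import dataclass


@dataclass
class PID:
    kp: float
    ki: float
    kd: float
    integral: float = 0.0
    prev_error: float = 0.0

    def update(self, target: float, measured: float, dt: float) -> float:
        """Return the control command for one time step of length dt."""
        if dt <= 0:
            raise ValueError("dt must be positive")
        error = target - measured
        self.integral += error * dt
        derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Hypothetical gains for a single joint running a 1 kHz loop.
# kd is zero here to avoid a derivative spike on the very first sample.
knee = PID(kp=40.0, ki=5.0, kd=0.0)
command = knee.update(target=0.50, measured=0.45, dt=0.001)
print(command)
```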

Above this layer, model-based approaches such as inverse kinematics and inverse dynamics compute the required forces and joint trajectories for coordinated arm and leg movements.
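Inverse kinematics is easiest to see on a planar two-link arm, where a closed-form solution exists. The sketch below is a simplified stand-in for the many-joint problem a humanoid actually solves; the link lengths and target point are illustrative, and only one of the two possible elbow configurations is returned.

```python
import math
from typing import Tuple


def two_link_ik(x: float, y: float, l1: float, l2: float) -> Tuple[float, float]:
    """Joint angles (shoulder, elbow) in radians that place the tip at (x, y)."""
    d2 = x * x + y * y
    cos_q2 = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if abs(cos_q2) > 1.0 + 1e-9:
        raise ValueError("target is outside the reachable workspace")
    cos_q2 = max(-1.0, min(1.0, cos_q2))
    q2 = math.acos(cos_q2)  # one of the two elbow solutions
    q1 = math.atan2(y, x) - math.atan2(l2 * math.sin(q2), l1 + l2 * math.cos(q2))
    return q1, q2


def two_link_fk(q1: float, q2: float, l1: float, l2: float) -> Tuple[float, float]:
    """Forward kinematics, used here to verify the IK result."""
    x = l1 * math.cos(q1) + l2 * math.cos(q1 + q2)
    y = l1 * math.sin(q1) + l2 * math.sin(q1 + q2)
    return x, y


# Hypothetical arm with 0.30 m upper and 0.25 m lower links.
q1, q2 = two_link_ik(0.35, 0.20, 0.30, 0.25)
print(q1, q2, two_link_fk(q1, q2, 0.30, 0.25))
```

Full humanoids generally rely on numerical, Jacobian-based solvers because closed-form solutions are not available for arbitrary redundant chains.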

More advanced strategies—Model Predictive Control (MPC) and Whole-Body Control (WBC)—optimize motion in real time by considering constraints like joint limits, ground contact forces, and center-of-mass stability. These algorithms enable robots to handle disturbances, climb stairs, or recover from pushes without losing balance.

Machine learning, particularly reinforcement learning, is increasingly used to generate adaptive gait patterns and autonomous manipulation policies.

Learned models augment physics-based controllers by improving efficiency and robustness in unstructured environments. Together, these layered control systems provide the precision, stability, and adaptability required for human-like motion.

AI and Decision-Making: How Robots Interpret and Act

Artificial intelligence provides the high-level cognition of a humanoid robot: interpreting what the sensors report and deciding what to do next.

Perception models process camera feeds, LiDAR maps, audio signals, and tactile data to identify objects, recognize human gestures, interpret speech, and estimate environmental context.

This information flows into high-level planning modules that determine the robot’s next action, such as selecting a grasp strategy, navigating around obstacles, or coordinating arm–leg motions during a task. Techniques like behavior trees, task planners, and semantic mapping ensure decisions are structured, traceable, and safe.
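To show why behavior trees make decisions structured and traceable, here is a minimal sketch with two composite node types. The node classes, task names, and state keys are all hypothetical, and the sketch omits the "running" status that real behavior-tree libraries use for long-lived actions.

```python
from typing import Callable, Dict, List

SUCCESS = "success"
FAILURE = "failure"


class Action:
    """Leaf node: runs a function on the shared state and records the outcome."""

    def __init__(self, name: str, fn: Callable[[Dict], bool]) -> None:
        self.name = name
        self.fn = fn

    def tick(self, state: Dict) -> str:
        ok = bool(self.fn(state))
        state.setdefault("trace", []).append((self.name, ok))
        return SUCCESS if ok else FAILURE


class Sequence:
    """Succeeds only if every child succeeds, evaluated in order."""

    def __init__(self, children: List) -> None:
        self.children = children

    def tick(self, state: Dict) -> str:
        for child in self.children:
            if child.tick(state) == FAILURE:
                return FAILURE
        return SUCCESS


class Fallback:
    """Tries children in order and succeeds on the first success."""

    def __init__(self, children: List) -> None:
        self.children = children

    def tick(self, state: Dict) -> str:
        for child in self.children:
            if child.tick(state) == SUCCESS:
                return SUCCESS
        return FAILURE


# Hypothetical task: grasp with the right hand, else fall back to the left.
tree = Sequence([
    Action("object_detected", lambda s: s["object_visible"]),
    Fallback([
        Action("grasp_right", lambda s: s["right_hand_free"]),
        Action("grasp_left", lambda s: s["left_hand_free"]),
    ]),
])

state = {"object_visible": True, "right_hand_free": False, "left_hand_free": True}
result = tree.tick(state)
print(result, state["trace"])
```

The recorded trace lists every node that was evaluated and its outcome, which is the kind of audit trail that makes this style of decision logic easy to inspect.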

Motion plans generated by AI are executed through physics-based controllers, allowing the robot to interact smoothly with human environments.

Reinforcement learning is increasingly used to refine behaviors such as grasping, balancing, and adaptive locomotion—enabling robots to improve performance based on experience.

Combined, perception AI, decision logic, and learning frameworks provide the autonomy and intelligence needed for reliable, human-aware operation.

How Do Humanoid Robots Walk? The Science of Bipedal Locomotion

Walking is one of the hardest tasks in robotics. Understanding how humanoid robots walk is essential to understanding how humanoid robots work.

Humanoid robots maintain stability using:

Zero Moment Point (ZMP) monitoring

Center-of-mass (CoM) regulation

Ground reaction force estimation

Real-time gait optimization
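ZMP monitoring can be sketched with the widely used cart-table (linear inverted pendulum) simplification, in which the ZMP along one horizontal axis depends only on the CoM position, CoM acceleration, and a constant CoM height. The foot limits, safety margin, and sample values below are assumptions for illustration; real controllers estimate the ZMP from the full dynamics or from force–torque measurements.

```python
G = 9.81  # gravitational acceleration, m/s^2


def zmp_from_com(com_pos: float, com_acc: float, com_height: float) -> float:
    """ZMP along one horizontal axis under the cart-table model:
    p = x - (z_c / g) * x_ddot, with constant CoM height z_c."""
    return com_pos - (com_height / G) * com_acc


def zmp_is_safe(zmp: float, foot_min: float, foot_max: float,
                margin: float = 0.01) -> bool:
    """True if the ZMP stays inside the foot extent, minus a safety margin."""
    return foot_min + margin <= zmp <= foot_max - margin


# Hypothetical state: CoM 2 cm ahead of the ankle, accelerating forward
# at 0.5 m/s^2, with the CoM held 0.8 m above the ground.
zmp = zmp_from_com(com_pos=0.02, com_acc=0.5, com_height=0.8)
print(zmp, zmp_is_safe(zmp, foot_min=-0.05, foot_max=0.15))
```

When the computed ZMP approaches the edge of the support area, the controller has to react, for example by adjusting CoM acceleration or taking a step.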

Gait generation algorithms compute:

Step length

Swing trajectories

Timing adjustments

Terrain adaptation

Advanced humanoids employ MPC and WBC to modulate momentum and redistribute weight, enabling tasks such as stair climbing, running, and push recovery.

Reinforcement learning further improves robustness, allowing robots to adapt to slippery floors, unexpected obstacles, and uneven terrain—situations where classical control alone struggles.

This holistic integration of control, sensing, and learning forms the core of how humanoid robots walk and maintain balance.

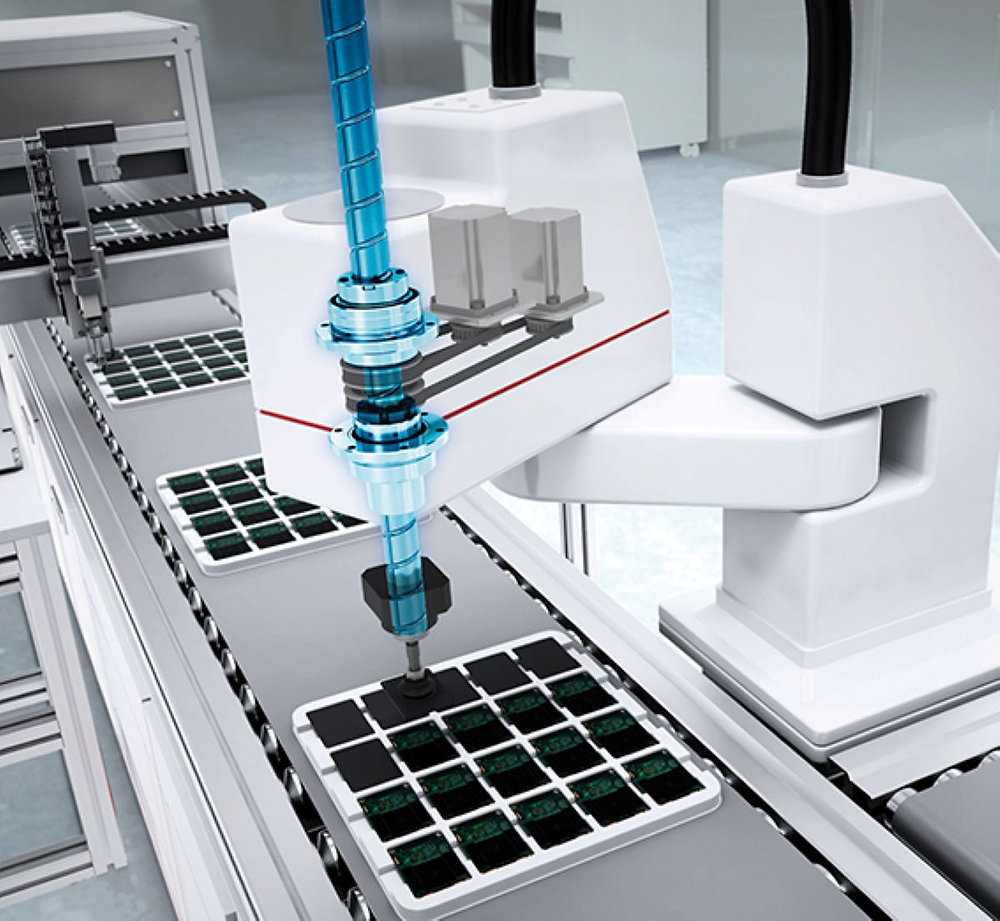

Manipulation: How Robots Use Their Arms and Hands

Manipulation showcases another dimension of how humanoid robots work. It relies on coordinated arm kinematics, precision sensing, and real-time force control to handle objects safely and accurately.

Each arm is modeled as a multi-DoF kinematic chain, with actuators driving shoulder, elbow, and wrist joints to achieve human-range reach and dexterity.

High-resolution encoders and torque sensors provide continuous feedback on joint position and load, enabling stable trajectories during both free-space motion and contact-rich tasks.

The hands—often equipped with multi-fingered, tendon-driven or direct-drive mechanisms—use tactile arrays and force-torque sensors to measure grip pressure, shear forces, and object shape.

This allows fine manipulation such as rotating tools, picking irregular objects, or performing two-handed tasks requiring coordinated force distribution. Control algorithms integrate inverse kinematics, impedance control, and grasp planners to ensure compliant interaction with unknown items.
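Impedance control, mentioned above, makes the end effector behave like a virtual spring and damper around its target instead of tracking position rigidly. The one-dimensional sketch below shows the basic control law; the stiffness, damping, and positions are hypothetical values.

```python
def impedance_force(x_des: float, x: float,
                    v_des: float, v: float,
                    stiffness: float, damping: float) -> float:
    """Commanded force from a virtual spring-damper: F = K (x_d - x) + D (v_d - v)."""
    return stiffness * (x_des - x) + damping * (v_des - v)


# Hypothetical case: the fingertip is stopped by an object 2 cm short of its
# target. The commanded force stays bounded by stiffness times position error
# rather than growing until the position error is eliminated.
force = impedance_force(x_des=0.10, x=0.08, v_des=0.0, v=0.0,
                        stiffness=300.0, damping=20.0)
print(force)
```

Lowering the stiffness makes contact softer at the cost of positional accuracy, which is the trade-off that compliant interaction with unknown objects depends on.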

Increasingly, learning-based policies refine grasp quality and adapt manipulation strategies to new environments, allowing humanoids to handle tasks ranging from warehouse picking to delicate assembly operations.

Power Systems: What Keeps Humanoid Robots Running

Humanoid robots typically use high-density lithium-ion battery packs with integrated battery-management systems for thermal regulation, cell balancing, and safety.

Regenerative braking, efficient motor drivers, and distributed DC power buses reduce energy losses, while onboard power electronics regulate voltages for actuators, sensors, and computing modules.

How Humanoid Robots Learn: Training AI Systems

Learning plays a major role in modern humanoid robotics. Robots learn through:

Supervised learning for perception

Reinforcement learning for locomotion and manipulation

Self-supervised learning for continual improvement

Simulation-to-real transfer using platforms like MuJoCo or Isaac Gym

Vision and perception models are trained on large annotated datasets to recognize objects, scenes, and human actions.

Reinforcement learning enables robots to optimize behaviors—such as balancing, grasping, or navigating—by receiving rewards for successful actions. High-fidelity physics simulators like MuJoCo or Isaac Gym accelerate learning by allowing millions of trials without hardware wear.

These pipelines allow robots to acquire new skills without hand-engineering every behavior, which speeds up how quickly humanoids improve and adapt.
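The idea of learning from rewards can be illustrated with the classic tabular Q-learning update. This is a deliberately tiny example: humanoid locomotion and manipulation policies are generally trained with deep reinforcement learning on continuous states, and the states, actions, and reward values below are hypothetical.

```python
from typing import Dict, List


def q_update(q: Dict[int, List[float]], state: int, action: int,
             reward: float, next_state: int,
             alpha: float = 0.1, gamma: float = 0.99) -> None:
    """One Q-learning step: move Q(s, a) toward r + gamma * max_a' Q(s', a')."""
    best_next = max(q[next_state])
    td_target = reward + gamma * best_next
    q[state][action] += alpha * (td_target - q[state][action])


# Toy problem: state 0 = "tilting", state 1 = "upright".
# Action 1 ("correct posture") taken while tilting earns a reward of 1.
q_table = {0: [0.0, 0.0], 1: [0.0, 0.0]}
for _ in range(50):
    q_update(q_table, state=0, action=1, reward=1.0, next_state=1)
print(q_table)
```

After repeated updates, the value of the rewarded action rises above the alternative, so a policy that picks the highest-valued action will prefer it.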

How Humanoid Robots Work Together

Understanding how humanoid robots can work together involves examining how multi-agent coordination, shared mapping, and AI-driven decision frameworks allow multiple humanoids to operate as a unified system.

When equipped with synchronized perception and communication channels, humanoid robots can exchange 3D environment maps, allocate tasks dynamically, and adjust their movements to avoid interfering with one another.
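Dynamic task allocation can be sketched with a simple greedy rule: each task goes to the nearest robot that is still free. This is only one possible strategy, chosen here for brevity; the robot names, task names, and coordinates are hypothetical, and real systems may use auctions or optimization-based assignment instead.

```python
import math
from typing import Dict, Tuple

Point = Tuple[float, float]


def allocate_tasks(robots: Dict[str, Point], tasks: Dict[str, Point]) -> Dict[str, str]:
    """Greedily assign each task (in the given order) to the nearest free robot."""
    free = dict(robots)
    assignment: Dict[str, str] = {}
    for task, task_pos in tasks.items():
        if not free:
            break  # more tasks than robots: remaining tasks stay unassigned
        nearest = min(free, key=lambda name: math.dist(free[name], task_pos))
        assignment[task] = nearest
        del free[nearest]
    return assignment


# Hypothetical positions on a shared 2D map (metres).
robots = {"humanoid_a": (0.0, 0.0), "humanoid_b": (10.0, 0.0)}
tasks = {"lift_crate": (9.0, 0.0), "scan_shelf": (1.0, 0.0)}
print(allocate_tasks(robots, tasks))
```

A greedy rule depends on task order and is not guaranteed to minimize total travel distance, but it shows how a shared map turns task allocation into a straightforward computation.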

Multi-agent reinforcement learning enables groups of humanoids to learn cooperative behaviors such as lifting large objects, performing assembly tasks, or coordinating motion in tight spaces.

By sharing force, motion, and intent data in real time, teams of humanoids can achieve greater efficiency and robustness than a single robot working alone.

This capability will be essential for future applications in warehouses, construction sites, and service environments where collaborative autonomy is required.

Challenges in Making Humanoid Robots Practical

Despite rapid progress, challenges remain:

Maintaining stability in unstructured environments

Power density limits and thermal management

Actuator torque, durability, and energy efficiency trade-offs

Perception failures in low-light or cluttered environments

High computational load for real-time whole-body control

Safety certification for human–robot interaction

High manufacturing and maintenance costs

Overcoming these challenges is essential for scaling humanoid robots to industrial and commercial use.

Frequently Asked Questions

1. How do humanoid robots work in real environments?

They combine sensor fusion, AI planning, and real-time control to perceive surroundings, make decisions, and execute coordinated whole-body movements.

2. How do humanoid robots walk without falling?

Robots maintain balance through ZMP control, CoM regulation, and MPC-driven gait adjustments.

3. Can humanoid robots work together?

Yes. Multi-robot coordination uses shared mapping, wireless communication, and multi-agent AI to synchronize tasks.

4. What limits humanoid robots today?

Battery capacity, actuator performance, perception failures, and high cost are the primary constraints.

5. Will humanoid robots become common?

As power, control, and AI systems improve, humanoids will increasingly appear in logistics, manufacturing, healthcare, and home assistance.

Integrating High-Precision Force Sensing into Humanoid Robots

Bota Systems’ high-precision, lightweight 6-axis force-torque sensors play a critical role in advancing humanoid robotics by providing the detailed contact awareness required for safe locomotion, compliant manipulation, and human–robot interaction.

When integrated at the wrists, ankles, or fingertips, force–torque sensors provide real-time measurements of contact forces and moments, improving grasp stability, slip detection, gait robustness on uneven terrain, and rapid response to unexpected collisions—capabilities that vision systems and joint encoders alone cannot deliver.

Bota Systems’ lightweight, fully integrated force–torque sensors are designed to meet these requirements while minimizing added mass, cabling, and integration complexity, making them well suited for humanoid platforms where balance, agility, and dynamic performance are tightly coupled to sensor placement and weight distribution.
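As a generic illustration of how force–torque readings support slip detection, the sketch below applies a Coulomb friction-cone check to a single contact. It is not a description of any vendor's firmware or API; the function names, friction coefficient, and force values are assumptions.

```python
import math


def slip_margin(fx: float, fy: float, fz: float, mu: float) -> float:
    """Remaining friction budget in newtons for one contact.

    fx, fy are tangential forces, fz is the normal force pressing the
    surfaces together, and mu is the assumed friction coefficient.
    A positive result means the contact is inside the friction cone.
    """
    tangential = math.hypot(fx, fy)
    return mu * max(fz, 0.0) - tangential


def slip_likely(fx: float, fy: float, fz: float, mu: float) -> bool:
    """True when the tangential load exceeds what friction can hold."""
    return slip_margin(fx, fy, fz, mu) < 0.0


# Hypothetical grasp: 5 N of tangential load with an assumed mu of 0.5.
print(slip_likely(3.0, 4.0, 20.0, 0.5))  # firm grip, 20 N normal force
print(slip_likely(3.0, 4.0, 8.0, 0.5))   # weak grip, 8 N normal force
```

A controller could use a shrinking margin as a cue to increase grip force before the object actually begins to slide.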

Conclusion

Understanding how humanoid robots work requires examining mechanical engineering, sensing, control theory, AI, and learning algorithms as a unified system.

As these technologies continue evolving, humanoid robots are positioned to become one of the most impactful automation platforms of the next decade.
