
Generative AI in robotics is changing what robots can do: rather than only following programmed instructions, they can learn from experience. As automation moves beyond structured factory floors into dynamic, contact-rich environments, intelligence alone is no longer enough. Self-learning robots must be able to sense, predict, and adapt in real time.

This is where generative AI marks a fundamental shift. By enabling robots to model the physical world, simulate actions, and refine their behaviour through experience, it allows a much higher degree of autonomy. Yet the success of these models depends on one critical factor: high-fidelity force and torque sensing. Without precise physical feedback, even the most advanced AI remains disconnected from reality.

What Does Generative AI Mean for Robotics?

Traditional robotic intelligence is largely reactive. A perception system observes the environment, a controller selects an action, and feedback is used to correct errors. While effective in structured settings, this approach struggles when robots operate in unstructured environments and perform contact-rich tasks such as manipulation, human interaction, or adaptive assembly.

Generative AI in robotics introduces a crucial new capability: the ability to model the world and imagine future outcomes. Imagine a robot picking up an object it has never seen before. Instead of relying on a fixed rule, it predicts how the object might respond, adjusts its motion as contact forces change, and learns from the outcome.

This ability to anticipate, feel, and adapt is what generative AI brings to modern robotics. These models learn underlying patterns in data and use them to:

Predict future states of the environment

Generate robot motions and trajectories

Simulate physical interactions

Adapt control strategies in real time

This allows robots to plan by prediction, not just reaction. In practice, this means a robot can internally simulate multiple action sequences, evaluate their consequences, and select behaviours that are safer, more efficient, or more successful—before physically executing them.

Model-based reinforcement learning studies have shown that robots using learned generative dynamics can solve manipulation and locomotion tasks with far fewer real-world trials, significantly reducing training time and hardware wear.

The Self-Learning Loop in Generative Robotics

To truly understand self-learning robots, it helps to view them as closed-loop learning systems rather than static machines.

A typical generative self-learning loop looks like this:

Sense: The robot collects multimodal data: vision, proprioception, force, torque, and sometimes tactile signals.

Encode: Raw sensor data is compressed into a latent representation that captures the robot’s belief about the current physical state.

Generate (Predict): A generative model predicts multiple possible future states resulting from different actions.

Evaluate: The robot assesses predicted outcomes based on task objectives, safety constraints, and uncertainty.

Act: The selected action is executed in the real world.

Learn: The difference between predicted and actual outcomes updates the generative model.
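The six steps above can be sketched as a toy closed loop. This is a minimal illustration under heavy assumptions, not a real controller. The "world" is a hypothetical 1-D push task. The generative model is a single uncertain gain, sampled to produce several possible futures. The force limit comes from an assumed linear contact model (`FORCE_PER_PUSH`), and learning is a plain least-mean-squares update. All names and constants are made up for illustration.

```python
import random

# Hypothetical 1-D task: push an object from x = 0 to a goal position.
TRUE_GAIN = 0.5       # unknown to the robot: displacement per unit push
FORCE_PER_PUSH = 8.0  # assumed contact model: predicted force (N) per unit push
FORCE_LIMIT = 6.0     # N, safety constraint applied in the Evaluate step

def sense(x, rng):
    # Sense: noisy position reading (a real robot would also read F/T, vision, ...)
    return x + rng.gauss(0.0, 0.001)

def encode(obs):
    # Encode: in this toy the latent state is just the measured position
    return obs

def generate(z, u, gain_mean, gain_std, rng, n=16):
    # Generate: sample several possible next states for action u
    return [z + rng.gauss(gain_mean, gain_std) * u for _ in range(n)]

def evaluate(futures, u, goal):
    # Evaluate: mean squared distance to goal; actions over the force limit are infeasible
    if FORCE_PER_PUSH * abs(u) > FORCE_LIMIT:
        return float("inf")
    return sum((f - goal) ** 2 for f in futures) / len(futures)

def run(goal=1.0, steps=30, seed=0):
    rng = random.Random(seed)
    x = 0.0
    gain_mean, gain_std, lr = 0.2, 0.3, 0.5   # deliberately poor initial model
    candidates = [i / 10 for i in range(-10, 11)]
    max_push = 0.0
    for _ in range(steps):
        z = encode(sense(x, rng))
        u = min(candidates,
                key=lambda a: evaluate(generate(z, a, gain_mean, gain_std, rng), a, goal))
        x += TRUE_GAIN * u                        # Act: the real world responds
        z_next = encode(sense(x, rng))
        err = (z_next - z) - gain_mean * u        # Learn: predicted vs. actual outcome
        gain_mean += lr * err * u                 # LMS update of the dynamics model
        gain_std = max(0.02, 0.9 * gain_std)      # shrink uncertainty as data accrues
        max_push = max(max_push, abs(u))
    return x, gain_mean, max_push
```

`run()` returns the final position, the learned gain, and the largest push used. Starting from a deliberately wrong gain of 0.2, the Learn step pulls the estimate toward the true value, and the Evaluate step keeps every executed action inside the force limit.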

Generative AI in robotics operates at the prediction and imagination stage, enabling the robot to learn not just from success or failure, but from anticipated futures.

From Data to Embodied Intelligence

Many misconceptions arise because people equate generative AI in robotics with generative AI in software domains. The difference is embodiment.

Robots do not learn from text or images alone—they learn through physical interaction.

Embodied learning means:

Learning how objects resist motion

Understanding friction, compliance, and deformation

Adapting to uncertainty in contact

A robot lifting a cup does not merely recognize the cup visually; it learns:

How heavy it is

Whether it is slipping

How much force is safe
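A wrist-mounted force-torque sensor can make these three questions quantitative. The sketch below rests on several assumptions: a static hold, a sensor z-axis aligned with gravity, and a known reading for the unloaded tool. It also assumes a two-finger grasp that shares the weight equally and Coulomb friction with a known coefficient `mu`. The function names and the 1.5 safety factor are illustrative choices, not a standard.

```python
G = 9.81  # gravitational acceleration, m/s^2

def payload_mass(fz_measured, fz_unloaded):
    """How heavy: mass from the change in vertical wrist force during a static hold."""
    return abs(fz_measured - fz_unloaded) / G

def min_grip_force(mass, mu, n_contacts=2):
    """Smallest normal force per contact keeping the load inside the friction cone
    (tangential <= mu * normal), with the weight shared equally by the contacts."""
    return mass * G / (n_contacts * mu)

def is_slipping(f_tangential, f_normal, mu):
    """Whether it is slipping: Coulomb friction-cone check for a single contact."""
    return f_tangential > mu * f_normal

def safe_grip_force(mass, mu, max_force, safety=1.5):
    """How much force is safe: grip with a margin, never above the object's limit."""
    f = safety * min_grip_force(mass, mu)
    if f > max_force:
        raise ValueError("object cannot be held securely within its force limit")
    return f
```

For example, a vertical reading that changes from -12.0 N to -14.943 N corresponds to a 0.3 kg cup. With `mu = 0.5`, each finger then needs at least about 2.94 N of grip force.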

Generative AI enables robots to internalize these physical relationships by learning probabilistic models of interaction.

Without embodiment, AI can describe the world; with embodiment, it can operate within it.

Generative AI Needs Physical Grounding

In robotics, generative AI must be anchored to real-world interaction to be effective. Physical grounding refers to the process by which generative models align their internal predictions with measurable physical quantities such as force, torque, and proprioceptive feedback.

Unlike purely data-driven AI, robotic generative models are continuously corrected through sensorimotor interaction, allowing them to account for contact dynamics, compliance, and actuation uncertainty.
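One simple way to picture this correction is a model that predicts contact force from penetration depth and is updated whenever the sensor reports the actual force. The sketch assumes a linear spring contact model `f = k * d` and uses recursive least squares with a forgetting factor. Both are stand-ins chosen for clarity: a real generative model would correct far more than a single stiffness parameter.

```python
import random

class StiffnessEstimator:
    """Recursive least squares (RLS) estimate of k in the contact model f = k * d."""

    def __init__(self, k0=100.0, p0=1e6, forgetting=0.99):
        self.k = k0            # initial (deliberately poor) stiffness guess, N/m
        self.p = p0            # estimate covariance: large means "unsure"
        self.lam = forgetting  # < 1 lets the estimate track slow changes

    def predict(self, depth):
        """Model prediction of contact force (N) for a penetration depth (m)."""
        return self.k * depth

    def update(self, depth, measured_force):
        """Ground the model with one force measurement; returns the prediction error."""
        error = measured_force - self.predict(depth)
        gain = self.p * depth / (self.lam + depth * self.p * depth)
        self.k += gain * error
        self.p = (self.p - gain * depth * self.p) / self.lam
        return error

def calibrate(true_k=2500.0, samples=50, noise_n=0.05, seed=1):
    """Simulate probing a surface of unknown stiffness with a noisy force sensor."""
    rng = random.Random(seed)
    est = StiffnessEstimator()
    for i in range(samples):
        depth = 0.0005 * (i % 10 + 1)          # 0.5 mm to 5 mm
        force = true_k * depth + rng.gauss(0.0, noise_n)
        est.update(depth, force)
    return est
```

After a few dozen probes, the estimate moves from the initial 100 N/m guess to close to the simulated 2500 N/m. Its force predictions then agree with the sensor to within the measurement noise.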

Key Physical Signals for Self-Learning Robots

Contact forces during grasping

Torque during insertion or assembly

Compliance during human interaction

Micro-vibrations and slip detection
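Slip often shows up first as small, high-frequency fluctuations in the tangential force rather than as a change in its average. A common style of detector separates these micro-vibrations from the slowly varying load and flags slip when their energy rises. The sketch below uses assumed values: 1 kHz sampling, a 50 Hz first-order high-pass filter, a 20-sample RMS window, and a 0.05 N threshold. All of these are hypothetical and sensor-dependent, as is the synthetic test signal.

```python
import math
from collections import deque

def highpass(samples, fs, cutoff_hz):
    """First-order high-pass filter: removes the slowly varying load, keeps vibrations."""
    rc = 1.0 / (2.0 * math.pi * cutoff_hz)
    alpha = rc / (rc + 1.0 / fs)
    out, prev_x, prev_y = [], samples[0], 0.0
    for x in samples:
        y = alpha * (prev_y + x - prev_x)
        out.append(y)
        prev_x, prev_y = x, y
    return out

def detect_slip(force_t, fs=1000.0, cutoff_hz=50.0, window=20, threshold=0.05):
    """Index of the first sample whose windowed RMS of high-passed tangential force
    exceeds `threshold` (N), or None if no slip is detected."""
    buf = deque(maxlen=window)
    for i, y in enumerate(highpass(force_t, fs, cutoff_hz)):
        buf.append(y * y)
        if len(buf) == window and math.sqrt(sum(buf) / window) > threshold:
            return i
    return None

def synthetic_tangential_force(slip_start=None, fs=1000.0, n=1000):
    """Slowly rising load (1 N to 2 N); optional 200 Hz, 0.2 N vibration after slip_start."""
    return [1.0 + i / n + (0.2 * math.sin(2 * math.pi * 200 * i / fs)
                           if slip_start is not None and i >= slip_start else 0.0)
            for i in range(n)]
```

The slow 1 N ramp alone stays well below the threshold after filtering. With a vibration injected at sample 600, the detector fires a few milliseconds later.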

High-precision force–torque sensors enable robots to close the loop between generative predictions and physical execution—turning AI models into reliable real-world systems.

Recent surveys on diffusion models for robotic manipulation emphasize that generative policies require tight sensorimotor grounding to succeed in real-world settings. Force, torque, and tactile feedback are essential for correcting predictions, resolving contact uncertainty, and enforcing safety during execution. This reinforces the need for high-fidelity physical sensing in generative, self-learning robotic systems.

Representative Generative Models Used in Self-Learning Robotics

In self-learning robots, generative intelligence is realized through a small set of model architectures that support prediction, uncertainty handling, and adaptive control. The following examples illustrate the most commonly used generative models in modern robotic learning systems.

World Models (Latent Dynamics Models)

World models learn compact latent representations of the robot's state and environment dynamics, which lets them predict future physical states under candidate actions. This supports planning: robots can internally simulate interaction outcomes before executing anything.
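Planning by imagination can be sketched with a tiny latent world model and a random-shooting planner. Here the encoder simply averages redundant sensor readings, and the latent dynamics are a known double integrator. In a real system both would be learned networks, so treat every function below as a hypothetical stand-in. The planner scores sampled action sequences entirely inside the model, executes only the first action of the best one, and then replans.

```python
import random

DT = 0.1  # control period, s

def encode(obs):
    """Encoder: compress redundant raw readings into a 2-D latent (position, velocity).
    A stand-in for a learned network."""
    pos_readings, vel_readings = obs
    return (sum(pos_readings) / len(pos_readings), sum(vel_readings) / len(vel_readings))

def latent_step(z, u):
    """Latent dynamics z' = f(z, u); a double integrator standing in for a learned model."""
    pos, vel = z
    return (pos + DT * vel, vel + DT * u)

def imagine_cost(z, actions, goal):
    """Roll the model forward in imagination and score the predicted trajectory."""
    cost = 0.0
    for u in actions:
        z = latent_step(z, u)
        cost += (z[0] - goal) ** 2 + 0.1 * z[1] ** 2 + 0.01 * u ** 2
    return cost

def plan(z, goal, rng, horizon=10, samples=200):
    """Random shooting: sample action sequences, keep the best, return its first action."""
    best = min(([rng.uniform(-1.0, 1.0) for _ in range(horizon)] for _ in range(samples)),
               key=lambda seq: imagine_cost(z, seq, goal))
    return best[0]

def run(goal=1.0, steps=80, seed=0):
    rng = random.Random(seed)
    pos, vel = 0.0, 0.0
    for _ in range(steps):
        obs = ([pos + rng.gauss(0.0, 0.01) for _ in range(3)],
               [vel + rng.gauss(0.0, 0.01) for _ in range(2)])
        u = plan(encode(obs), goal, rng)
        pos, vel = pos + DT * vel, vel + DT * u   # the real system (matches the model here)
    return pos, vel
```

`run()` returns the final position and velocity. Because the planner replans at every step, it also absorbs the sensor noise added to the observations.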

Diffusion Models for Action and Motion Generation

Diffusion-based policies have emerged as a powerful generative approach for robot control. Starting from random noise, they iteratively refine sampled motions into action trajectories that tend to be smooth and physically plausible.

Unlike deterministic controllers, these models naturally represent uncertainty and multimodal outcomes, making them well suited for contact-rich manipulation. When combined with force–torque feedback, diffusion policies can adapt generated motions in real time to align with physical constraints.
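The iterative refinement behind diffusion policies can be shown with standard DDPM ancestral sampling over a short trajectory of waypoints. To keep the sketch self-contained, the trained noise-prediction network is replaced by the exact optimal denoiser for a hypothetical demonstration set that is Gaussian around a reference path. The noise schedule (50 steps, betas from 0.001 to 0.2) is also an assumption. A real policy could condition its denoiser on observations, including force-torque readings.

```python
import math
import random

def make_schedule(steps=50, beta_min=1e-3, beta_max=0.2):
    """Linear beta schedule and cumulative products alpha_bar_k."""
    betas = [beta_min + (beta_max - beta_min) * k / (steps - 1) for k in range(steps)]
    alpha_bars, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        alpha_bars.append(prod)
    return betas, alpha_bars

def predict_noise(x_k, alpha_bar, mean, std):
    """Stand-in for the learned denoiser eps_theta(x_k, k): the exact posterior-mean
    noise estimate when demonstrations are Gaussian around `mean` with spread `std`."""
    eps = []
    for x, m in zip(x_k, mean):
        gain = math.sqrt(alpha_bar) * std ** 2 / (alpha_bar * std ** 2 + 1.0 - alpha_bar)
        x0_hat = m + gain * (x - math.sqrt(alpha_bar) * m)
        eps.append((x - math.sqrt(alpha_bar) * x0_hat) / math.sqrt(1.0 - alpha_bar))
    return eps

def sample_trajectory(mean, std, rng, steps=50):
    """DDPM ancestral sampling: start from pure noise and iteratively denoise."""
    betas, alpha_bars = make_schedule(steps)
    x = [rng.gauss(0.0, 1.0) for _ in mean]
    for k in reversed(range(steps)):
        beta, a_bar = betas[k], alpha_bars[k]
        a_bar_prev = alpha_bars[k - 1] if k > 0 else 1.0
        eps = predict_noise(x, a_bar, mean, std)
        x = [(xi - beta / math.sqrt(1.0 - a_bar) * ei) / math.sqrt(1.0 - beta)
             for xi, ei in zip(x, eps)]
        if k > 0:  # add posterior noise on every step except the last
            sigma = math.sqrt(beta * (1.0 - a_bar_prev) / (1.0 - a_bar))
            x = [xi + sigma * rng.gauss(0.0, 1.0) for xi in x]
    return x
```

Each call starts from different noise and returns a different trajectory near the reference path. This sampling behavior is what lets diffusion policies represent several plausible motions instead of one deterministic output.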

Variational Autoencoders (VAEs) for Sensorimotor Representation

VAEs compress high-dimensional sensory and interaction data into structured latent spaces while preserving uncertainty. They often serve as foundational components within larger generative control and planning frameworks.
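Two pieces give a VAE's latent space its notion of uncertainty. First, the encoder outputs a mean and a log-variance rather than a single point. Second, training penalizes the KL divergence from a standard normal prior. The sketch shows only those pieces, in plain Python for a single sample. The encoder and decoder networks are omitted, and the unit-variance Gaussian decoder behind the reconstruction term is an assumption.

```python
import math
import random

def reparameterize(mu, log_var, rng):
    """Sample z = mu + sigma * eps with eps ~ N(0, I); in an autodiff framework this
    keeps z differentiable with respect to mu and log_var."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]

def kl_to_standard_normal(mu, log_var):
    """Closed-form KL( N(mu, diag(exp(log_var))) || N(0, I) )."""
    return 0.5 * sum(m * m + math.exp(lv) - 1.0 - lv for m, lv in zip(mu, log_var))

def negative_elbo(x, x_recon, mu, log_var, beta=1.0):
    """Reconstruction term for a unit-variance Gaussian decoder (up to a constant)
    plus beta times the KL regularizer."""
    recon = 0.5 * sum((a - b) ** 2 for a, b in zip(x, x_recon))
    return recon + beta * kl_to_standard_normal(mu, log_var)
```

A latent that exactly matches the prior costs nothing in KL. A very negative log-variance collapses samples onto the mean, which is how the model expresses high confidence.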

Generative AI Applications in Robotics

Intelligent Manipulation

Generative models allow robots to synthesize grasp strategies for previously unseen objects. With force-torque feedback, robots can adjust grip strength dynamically, avoiding damage or slippage.
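One simple way to turn force-torque feedback into grip adjustment is a regulator with three behaviors. It tracks the smallest grip force that keeps the measured tangential load inside the friction cone, plus a margin. It boosts the grip when slip is reported. It also limits how fast the command changes. The sketch assumes Coulomb friction with a known `mu` and a boolean slip flag from some detector. Every gain and limit is a hypothetical placeholder.

```python
def update_grip(f_grip, f_tangential, mu, slip_detected,
                margin=1.3, slip_boost=1.0, rate_limit=2.0, f_min=0.5, f_max=20.0):
    """One control tick of a hypothetical grip-force regulator (all forces in N)."""
    # Friction cone: normal force must be at least f_tangential / mu; add a margin
    target = margin * f_tangential / mu
    if slip_detected:
        # Slip means the model underestimated: grip harder than the current command
        target = max(target, f_grip + slip_boost)
    # Rate-limit the change, then clamp to the gripper's (and object's) safe range
    step = max(-rate_limit, min(rate_limit, target - f_grip))
    return max(f_min, min(f_max, f_grip + step))
```

The upper clamp `f_max` is where the object's own damage limit would go, so a fragile part is never squeezed harder than it can tolerate, even during slip recovery.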

Adaptive Assembly and Manufacturing

In flexible manufacturing, robots must handle parts with varying tolerances. Generative AI in robotics enables robots to learn optimal insertion forces, while force sensors validate and refine these learned strategies in real time.
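One common way force sensing refines an insertion strategy is admittance control. The measured force is turned into a motion command, so the robot settles at a desired contact force instead of a fixed position. The sketch below is a damping-only admittance law along a single insertion axis, with a hypothetical spring-like environment. The target force `f_ref` stands in for a value a learned model might propose, and all constants are assumptions.

```python
def admittance_step(z, f_measured, f_ref, damping, dt, v_max=0.05):
    """Damping-only admittance along the insertion axis:
    v = (f_ref - f_measured) / damping, saturated at v_max (m/s)."""
    v = (f_ref - f_measured) / damping
    v = max(-v_max, min(v_max, v))
    return z + v * dt

def contact_force(z, z_contact=0.01, stiffness=5000.0):
    """Hypothetical environment: free space until z_contact (m), then a linear spring."""
    return stiffness * max(0.0, z - z_contact)

def insert(f_ref=10.0, damping=500.0, dt=0.001, steps=3000):
    """Approach in free space, make contact, and settle at the target force."""
    z = 0.0
    for _ in range(steps):
        z = admittance_step(z, contact_force(z), f_ref, damping, dt)
    return z, contact_force(z)
```

The robot moves at `f_ref / damping` in free space and slows automatically on contact. It settles where the measured force equals `f_ref`, here 10 N at 2 mm past the contact point. This holds even if the part's actual position differs from the nominal one.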

Human-Robot Collaboration

Safe collaboration requires robots to “feel” human contact. Generative AI predicts interaction scenarios, while force-torque sensing ensures immediate, compliant responses.
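On the sensing side, a compliant reaction to human contact can start with something as simple as thresholding the filtered external force. Hysteresis keeps the robot from chattering between modes. The thresholds and smoothing factor below are illustrative placeholders, not values from any safety standard; a deployed system would typically derive them from a risk assessment. In the sketch, "yielding" simply means the caller should switch the controller to a compliant behavior.

```python
import math

class ContactMonitor:
    """Hypothetical safety monitor: smooths the external force magnitude and enters a
    compliant 'yield' mode above one threshold, leaving it only below a lower one."""

    def __init__(self, enter_n=25.0, exit_n=10.0, alpha=0.3):
        self.enter_n, self.exit_n, self.alpha = enter_n, exit_n, alpha
        self.filtered = 0.0
        self.yielding = False

    def update(self, fx, fy, fz):
        magnitude = math.sqrt(fx * fx + fy * fy + fz * fz)
        self.filtered += self.alpha * (magnitude - self.filtered)  # exponential smoothing
        if not self.yielding and self.filtered > self.enter_n:
            self.yielding = True
        elif self.yielding and self.filtered < self.exit_n:
            self.yielding = False
        return self.yielding
```

A sustained 40 N push trips the monitor after three samples. Once the contact is released, the monitor only returns to normal mode when the smoothed force drops below the lower 10 N threshold.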

Medical and Precision Robotics

In surgical and laboratory automation, generative models simulate delicate procedures. Force-torque sensing ensures that generated motions remain within safe biomechanical limits.
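A minimal form of this safeguard combines a model-based check on the generated motion with a sensor-based check during execution. The sketch assumes a linear tissue-stiffness model and a single force limit, both hypothetical. It clamps generated penetration depths so that the predicted force stays within the limit, and it lets the measured force override the model at run time.

```python
def filter_trajectory(depths, stiffness, f_max):
    """Clamp each generated depth (m) so the predicted force stiffness * depth (N)
    stays at or below f_max. Returns the filtered depths and the clipped indices."""
    d_max = f_max / stiffness
    filtered, clipped = [], []
    for i, d in enumerate(depths):
        if d > d_max:
            filtered.append(d_max)
            clipped.append(i)
        else:
            filtered.append(d)
    return filtered, clipped

def runtime_guard(measured_force, f_max):
    """The sensor has the final word: continue only while measured force <= f_max."""
    return measured_force <= f_max
```

The two checks complement each other. The filter catches generated motions that the model already knows are unsafe, and the runtime guard catches cases where the stiffness model itself is wrong.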

Why Force-Torque Sensing Is a Strategic Advantage

Despite rapid progress, generative AI for robotics still faces several critical challenges. Generated actions can be unpredictable, raising safety concerns in real-world, contact-rich environments, while the complexity of learned policies makes explainability and validation difficult.

In addition, training these systems often requires large numbers of real-world trials, increasing cost, time, and wear on robotic hardware. High-accuracy force-torque sensing plays a key role in addressing these limitations by providing reliable physical feedback for enforcing safety constraints, improving the interpretability of contact-driven behaviors, and enabling faster learning convergence with significantly fewer trials.

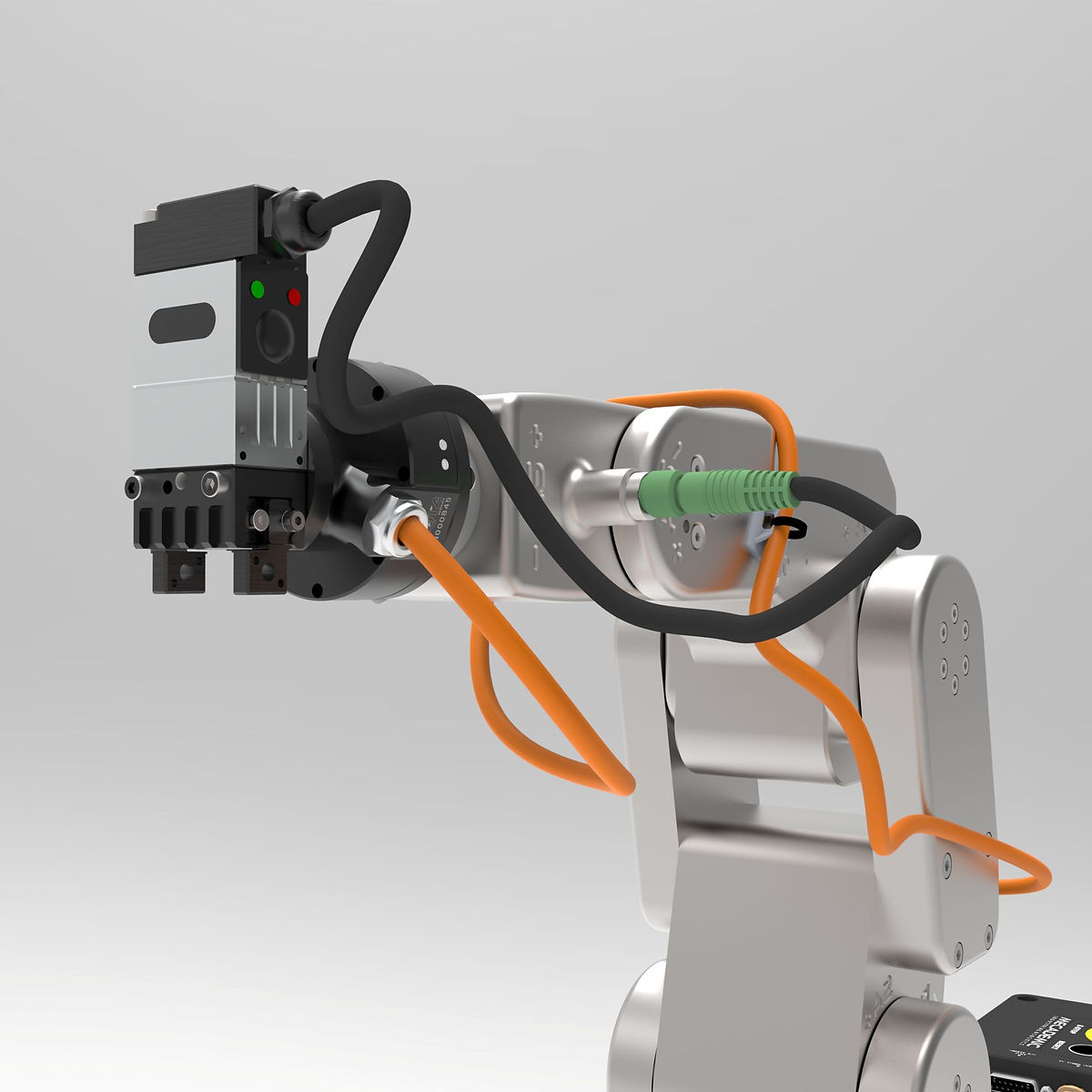

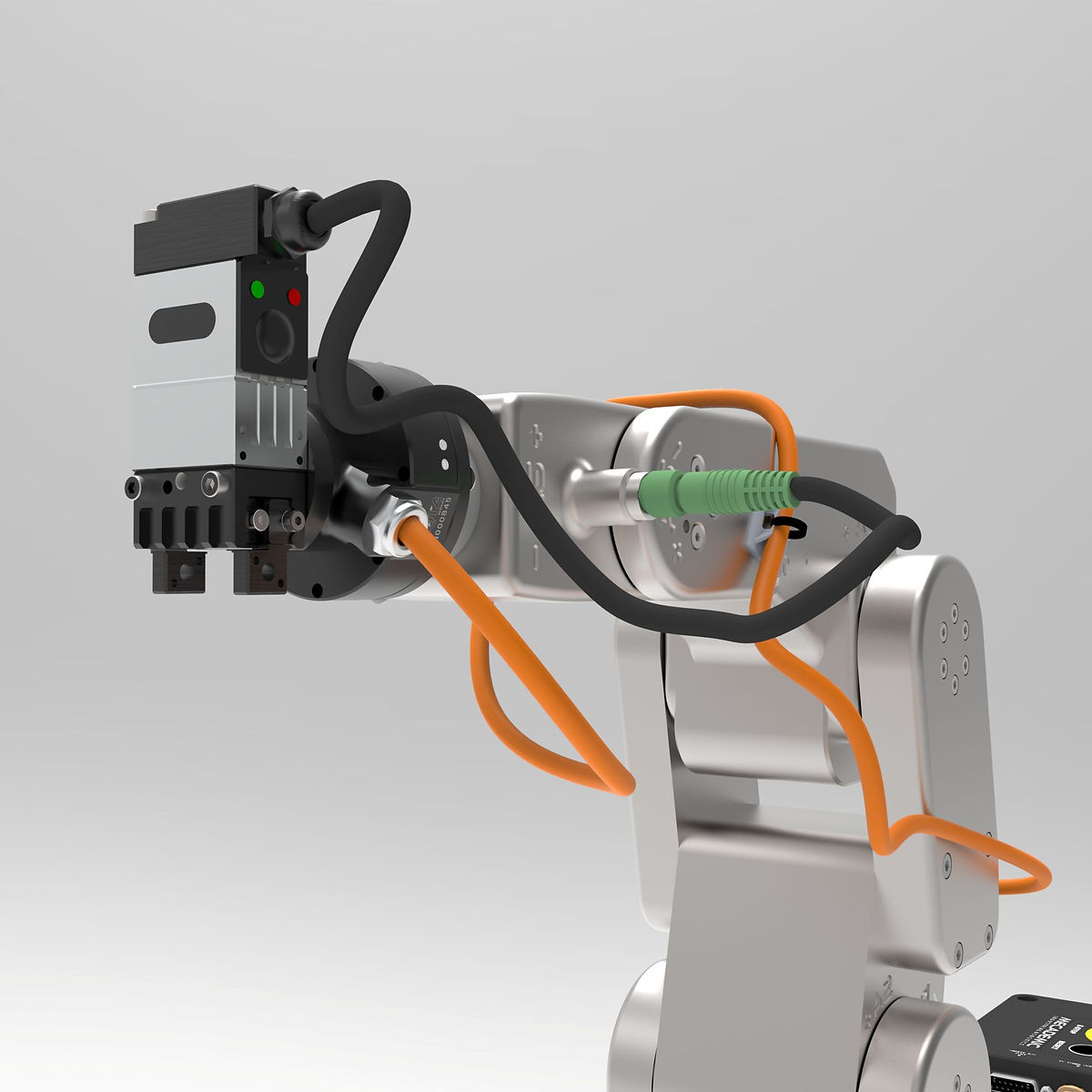

For companies developing next-generation robots, force-torque sensing is no longer optional—it is foundational. Bota Systems designs high-performance force-torque sensors that directly support the needs of generative AI robotics, including:

High bandwidth for dynamic interactions

Low noise for learning-based control

Compact integration for robot wrists and end-effectors

Accurate multi-axis force and torque measurement

These characteristics are critical for training generative models that depend on subtle physical cues to refine behavior.

The Future: Smarter Robots, Better Sensing

The future of generative AI in robotics points toward:

Foundation models shared across robot platforms

Self-learning manipulation skills transferable between tasks

Robots that reason, adapt, and collaborate naturally

As AI models grow more capable, the quality of physical sensing will increasingly define real-world performance. Companies that invest in precision force-torque sensing will be best positioned to unlock the full potential of generative AI robotics.

Conclusion

Generative AI in robotics is transforming robots from rigid machines into adaptive, self-learning systems. But intelligence alone is not enough. To operate safely and effectively in the real world, robots must feel as well as think.

By pairing generative AI with high-performance force-torque sensing, innovators can build robots that learn faster, act safer, and collaborate more naturally with humans. This convergence defines the next era of intelligent robotics—and it is where sensing leaders like Bota Systems play a pivotal role.
