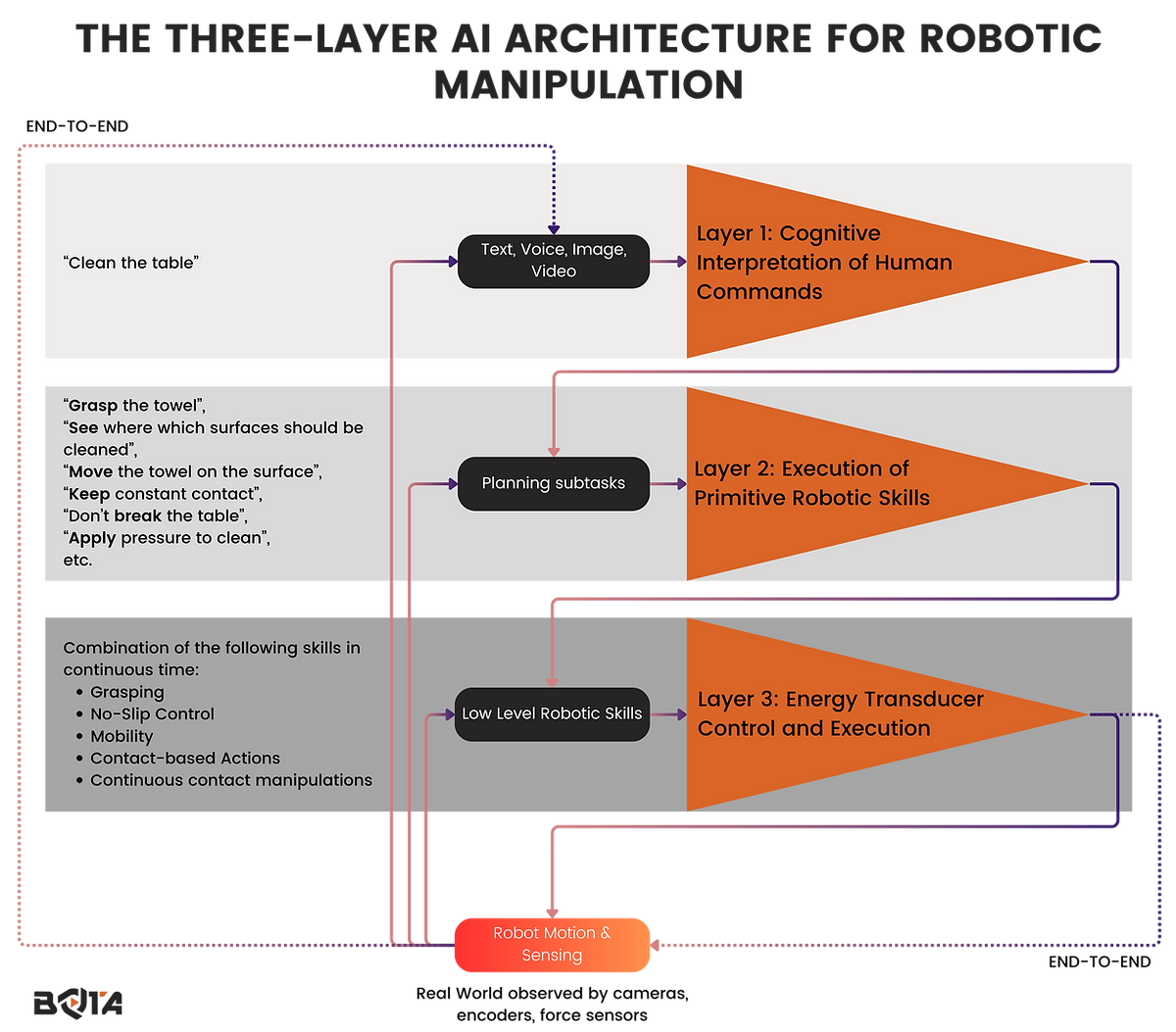

Robotic control systems are built on layers of artificial intelligence (AI) that allow robots to perceive their surroundings, interpret commands, and act on them. Simple locomotion commands, such as “go from point A to point B,” are relatively straightforward to specify, but learning locomotion through behavioral cloning is considerably harder: video-based imitation learning has been explored, yet reinforcement learning remains the dominant approach. Robotic manipulation tasks, such as “Pick up the spoon and stir the tea” or “Pick up the towel and clean the table,” involve a more intricate AI hierarchy. This article describes the three-layer AI structure that enables robots to process natural language commands and execute manipulation tasks with precision.

Layer 1: Cognitive Interpretation of Human Commands

The first layer of AI is responsible for understanding human language. It interprets high-level, abstract commands that humans issue, which often involve sensory perceptions and intuitive understanding. This layer faces significant challenges because human instructions are not always explicit or precise. For example, a command like “clean the table” can have multiple interpretations depending on context, surface material, and the type of debris present. The AI must break down the instruction into actionable robotic sub-tasks, considering object recognition, material properties, and environmental constraints.

Natural Language Processing (NLP) techniques, combined with knowledge graphs and contextual embeddings, are often employed in this layer.
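To make the idea of breaking an instruction into sub-tasks concrete, here is a minimal Python sketch. It assumes a hand-written lookup table in place of a real language model, and the names `SubTask`, `DECOMPOSITION_RULES`, and `decompose` are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class SubTask:
    skill: str   # name of a primitive skill the lower layer is assumed to offer
    target: str  # object or surface the skill acts on


# Hypothetical hand-written rules standing in for a learned NLP model.
DECOMPOSITION_RULES = {
    "clean the table": [
        SubTask("grasp", "towel"),
        SubTask("contact", "table"),
        SubTask("wipe", "table"),
        SubTask("release", "towel"),
    ],
    "stir the tea": [
        SubTask("grasp", "spoon"),
        SubTask("contact", "tea"),
        SubTask("stir", "tea"),
        SubTask("release", "spoon"),
    ],
}


def decompose(command: str) -> List[SubTask]:
    """Map a normalized command to an ordered list of sub-tasks."""
    key = command.strip().lower().rstrip(".")
    if key not in DECOMPOSITION_RULES:
        # Unknown or ambiguous commands are rejected rather than guessed at.
        raise ValueError(f"cannot interpret command: {command!r}")
    return list(DECOMPOSITION_RULES[key])


for step in decompose("Clean the table."):
    print(step.skill, step.target)
```

A real system would replace the lookup table with a model that also considers context, surface material, and the debris present. The sketch only shows the shape of the output this layer hands to the next one.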

Limitations

Because human language is inherently ambiguous, this layer can misinterpret a command and trigger unintended behavior. Research on grounding language in robotic perception (Tellex et al., 2011) and on hierarchical task decomposition (Andreas et al., 2017) continues to improve how reliably AI interprets commands.

Layer 2: Execution of Primitive Robotic Skills

Once the cognitive AI layer translates human commands into robotic instructions, the second layer ensures their execution through fundamental robotic skills. These primitive skills resemble the muscle memory and sensorimotor patterns that humans develop from infancy. The most common robotic primitive skills include:

Grasping: Securely holding objects with appropriate force.

No-slip Control: Ensuring objects do not slip from the gripper.

Mobility: Adjusting positions while maintaining balance.

Contact-based Actions: Making and maintaining controlled contact with objects.

Continuous Contact Manipulation: Moving objects while maintaining consistent force application.

These skills must be executed with high accuracy, because even a small deviation, such as a slightly misplaced grasp, can cause the whole task to fail. Unlike the first layer, where some uncertainty is tolerated, this layer must function with minimal error.
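As one worked example of a primitive skill, the no-slip condition for a two-finger parallel gripper can be estimated with a simple Coulomb friction model: the friction at the two contacts, 2·μ·N, must at least support the object's weight plus any inertial load. The sketch below assumes that simplified model; the function name, the default safety factor, and the example values are illustrative assumptions, not taken from a specific robot.

```python
G = 9.81  # gravitational acceleration in m/s^2


def min_grip_force(mass_kg: float, friction_coeff: float,
                   accel_ms2: float = 0.0, safety_factor: float = 2.0) -> float:
    """Normal force per finger (N) needed to hold an object without slipping.

    Assumes a two-finger parallel gripper, Coulomb friction, and vertical
    acceleration accel_ms2 added on top of gravity.
    """
    if mass_kg <= 0 or friction_coeff <= 0:
        raise ValueError("mass and friction coefficient must be positive")
    # Two contacts share the load: 2 * mu * N >= m * (g + a)
    return safety_factor * mass_kg * (G + accel_ms2) / (2.0 * friction_coeff)


# Hypothetical example: a 0.2 kg cup with a friction coefficient of 0.5.
print(round(min_grip_force(0.2, 0.5), 3))
```

Real grippers also have to account for torsional slip, deformable contacts, and uncertain friction, which is why the safety factor is kept as an explicit parameter.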

Limitations

The constraints here are primarily dictated by the robot’s kinematics, dynamics, and morphology, including payload limitations, speed constraints, and joint angle restrictions.
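A skill layer would typically check a planned motion against these limits before executing it. The following sketch assumes a simple per-joint limit record; `JointLimit`, `find_violations`, and all numeric values are hypothetical and only illustrate the kind of check involved.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass(frozen=True)
class JointLimit:
    lower_rad: float        # minimum joint angle
    upper_rad: float        # maximum joint angle
    max_speed_rad_s: float  # maximum absolute joint speed


def find_violations(targets_rad: Sequence[float],
                    speeds_rad_s: Sequence[float],
                    limits: Sequence[JointLimit],
                    payload_kg: float,
                    max_payload_kg: float) -> List[str]:
    """Return human-readable descriptions of every limit a plan would break."""
    problems = []
    if payload_kg > max_payload_kg:
        problems.append("payload exceeds rated maximum")
    for i, (q, dq, lim) in enumerate(zip(targets_rad, speeds_rad_s, limits)):
        if not lim.lower_rad <= q <= lim.upper_rad:
            problems.append(f"joint {i}: angle out of range")
        if abs(dq) > lim.max_speed_rad_s:
            problems.append(f"joint {i}: speed too high")
    return problems


# Hypothetical two-joint arm.
limits = [JointLimit(-1.0, 1.0, 2.0), JointLimit(0.0, 2.0, 1.0)]
print(find_violations([0.5, 2.5], [1.0, 1.5], limits,
                      payload_kg=1.0, max_payload_kg=3.0))
```

An empty list means the plan respects the modeled limits. Dynamics-related constraints such as torque limits would need a model of the arm and are left out of this sketch.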

Advancements in reinforcement learning (Levine et al., 2016) and imitation learning (Argall et al., 2009) have significantly improved robots’ ability to learn and refine these fundamental skills. Moreover, real-time sensor feedback, such as haptic sensing and force-torque measurements, plays a crucial role in enhancing precision.

Layer 3: Energy Transducer Control and Execution

The third and final layer translates robotic skills into machine-executable signals for energy transducers—actuators and sensors. This layer governs motor control, force application, and sensory feedback processing.

Actuators: Convert electrical or hydraulic energy into motion.

Sensors: Measure forces, torques, positions, and environmental parameters to ensure precise execution.

Safety Mechanisms: Enforce fail-safes to prevent unintended actions that could lead to damage or injury.

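Unlike the first two layers, which can tolerate some error, this layer is expected to operate with as close to 100% reliability as the hardware allows, because no lower layer exists to catch its faults.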
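The interplay of actuator command, sensor feedback, and fail-safe can be sketched as a single control step. This is a deliberately simplified proportional controller, assuming a normalized motor command and a torque sensor reading; the function names, gain, and limits are illustrative assumptions rather than values from any particular controller.

```python
def clamp(value: float, low: float, high: float) -> float:
    """Restrict value to the closed interval [low, high]."""
    return max(low, min(high, value))


def motor_command(target_pos: float, measured_pos: float,
                  measured_torque: float, *, kp: float = 5.0,
                  max_cmd: float = 1.0, torque_limit: float = 2.0) -> float:
    """Compute one normalized motor command in [-max_cmd, max_cmd].

    Returns 0.0 (stop) when the measured torque exceeds torque_limit,
    which stands in for a fail-safe in this sketch.
    """
    if abs(measured_torque) > torque_limit:
        return 0.0  # fail-safe: cut the command on unexpected load
    error = target_pos - measured_pos
    # Proportional term, saturated to what the actuator can accept.
    return clamp(kp * error, -max_cmd, max_cmd)


print(motor_command(1.0, 0.0, measured_torque=0.5))  # saturated command
print(motor_command(1.0, 0.0, measured_torque=3.0))  # fail-safe trips
```

Production controllers usually add integral and derivative terms, run at a fixed rate, and implement safety stops in dedicated hardware or firmware instead of application code.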

Limitations

The limiting factors include actuator and sensor resolution, bandwidth, response time, and range. If the robot’s hardware cannot execute a motion accurately, even a perfectly planned sequence from the second layer will fail. Accounting for hardware constraints through physics-based modeling (Siciliano & Khatib, 2016) helps keep this layer within safe and predictable limits.
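Sensor resolution is the easiest of these limits to quantify. As a rough illustration, assume an absolute rotary encoder with a given bit depth mounted on a single rigid link: the smallest angle it can distinguish is 2π divided by the number of counts, and the corresponding position uncertainty at the link tip is that angle times the link length. The function names and example values below are hypothetical.

```python
import math


def encoder_resolution_rad(bits: int) -> float:
    """Smallest distinguishable angle of an absolute encoder, in radians."""
    if bits <= 0:
        raise ValueError("bit depth must be positive")
    return 2.0 * math.pi / (2 ** bits)


def tip_resolution_m(bits: int, link_length_m: float) -> float:
    """Approximate position resolution at the end of a rigid link (arc length)."""
    return link_length_m * encoder_resolution_rad(bits)


# Hypothetical comparison for a 0.5 m link.
for bits in (8, 12, 16):
    print(bits, f"{tip_resolution_m(bits, 0.5) * 1000:.3f} mm")
```

Under these assumptions a 12-bit encoder on a 0.5 m link resolves the tip position to roughly 0.77 mm, so a task that needs finer placement cannot succeed on that joint regardless of how well the upper layers plan. Gear reduction, backlash, and link flexibility are ignored here.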

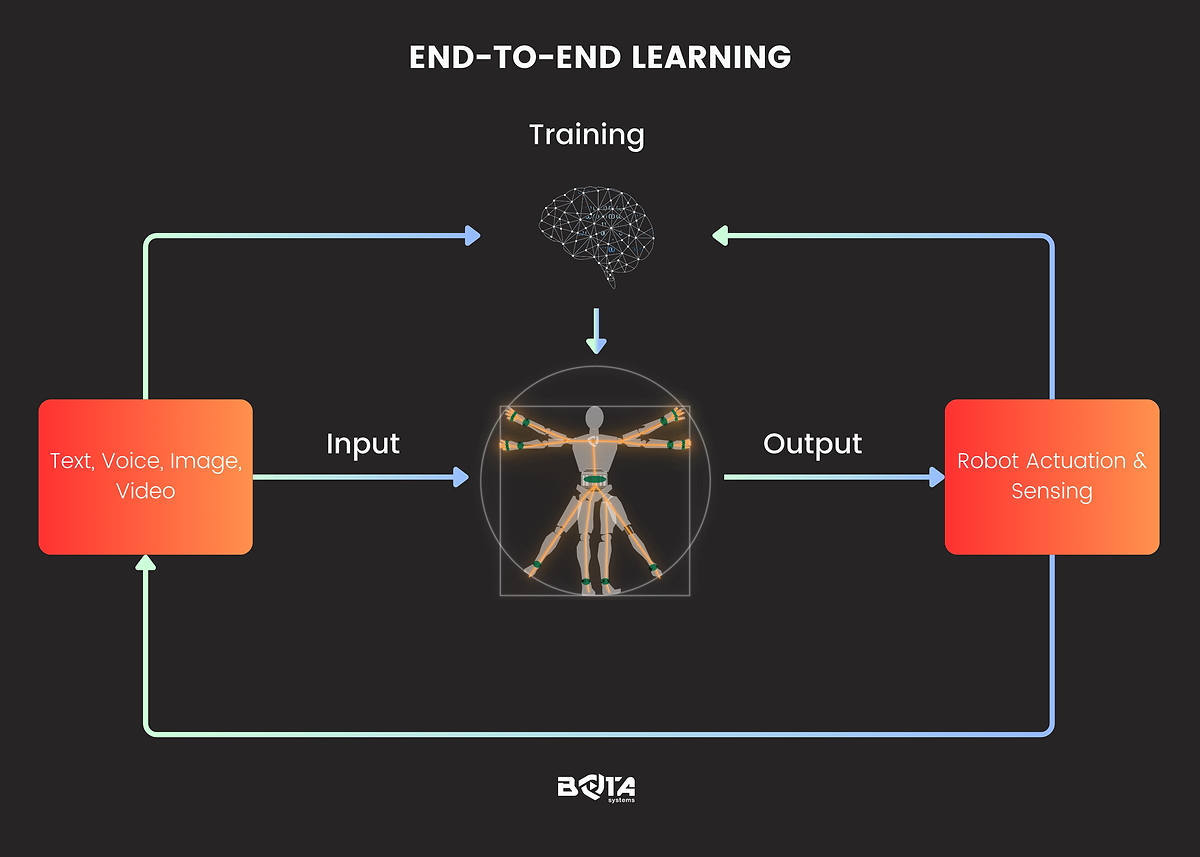

End-to-End Learning vs. Layered AI Approaches

One alternative to this three-layer approach is end-to-end policy learning, where a single model translates natural language directly into actuator commands. End-to-end learning avoids intermediate representations and can be efficient, but it tends to generalize poorly: training a robot to handle every possible scenario is nearly impossible given the vast range of environmental conditions and task variations. A hierarchical approach that separates cognitive understanding, skill execution, and actuator control makes it easier to achieve adaptability, robustness, and safety.
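The separation of layers can be illustrated with a toy pipeline in which each layer is an independent, replaceable function. Everything in this sketch is hypothetical: the command table, the skill setpoints, and the clamping limit are placeholders meant only to show how the three layers compose.

```python
from typing import Dict, List


# Layer 1 (hypothetical): command text -> ordered skill names.
def interpret(command: str) -> List[str]:
    plans = {"pick up the spoon": ["reach", "grasp", "lift"]}
    return plans[command.strip().lower()]


# Layer 2 (hypothetical): skill name -> sequence of setpoints.
SKILLS: Dict[str, List[float]] = {
    "reach": [0.2, 0.4],
    "grasp": [0.4],
    "lift": [0.4, 0.1],
}


# Layer 3 (hypothetical): setpoint -> actuator signal clamped to a safe range.
def actuate(setpoint: float, limit: float = 0.3) -> float:
    return max(-limit, min(limit, setpoint))


def run(command: str) -> List[float]:
    """Pass a command through all three layers and collect actuator signals."""
    signals = []
    for skill in interpret(command):
        for setpoint in SKILLS[skill]:
            signals.append(actuate(setpoint))
    return signals


print(run("Pick up the spoon"))
```

Because each layer has a narrow interface, one layer can be swapped out or tested without retraining the others, which is the practical argument for the layered design over a single end-to-end policy.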

Conclusion

The three-layer AI structure provides a logical and effective way to handle robotic locomotion and manipulation tasks. By breaking down complex commands into manageable levels, robots can execute actions reliably while ensuring safety and precision. Future research will continue to refine these layers, integrating improved NLP, reinforcement learning, and adaptive control mechanisms to enhance robotic autonomy and human-robot collaboration.

References

Andreas, J., Klein, D., & Levine, S. (2017). “Modular multitask reinforcement learning with policy sketches.” International Conference on Machine Learning (ICML).

Argall, B. D., Chernova, S., Veloso, M., & Browning, B. (2009). “A survey of robot learning from demonstration.” Robotics and Autonomous Systems, 57(5), 469-483.

Levine, S., Finn, C., Darrell, T., & Abbeel, P. (2016). “End-to-end training of deep visuomotor policies.” Journal of Machine Learning Research, 17(1), 1334-1373.

Siciliano, B., & Khatib, O. (Eds.). (2016). Springer Handbook of Robotics. Springer.

Tellex, S., Kollar, T., Dickerson, S., Walter, M. R., Banerjee, A. G., Teller, S., & Roy, N. (2011). “Understanding natural language commands for robotic navigation and mobile manipulation.” AAAI Conference on Artificial Intelligence.
