Artificial intelligence is rapidly moving beyond the digital world. While most AI systems still analyze data in the cloud, a new generation of intelligent machines is beginning to perceive, decide, and act in real-world environments. This shift is known as physical AI.

From adaptive industrial robots to autonomous vehicles and collaborative machines, physical AI combines advanced sensing, machine learning, and real-time control to enable systems that can interact safely and intelligently with the physical world.

Understanding what physical AI is – and why it matters – is becoming essential for engineers, manufacturers, and technology leaders preparing for the next wave of intelligent automation.

What Is Physical AI?

Physical AI refers to artificial intelligence systems that operate in and interact with the physical world through sensors, actuators, and mechanical systems.

Unlike traditional AI, which processes digital information (text, images, data), physical AI systems:

- Perceive real-world environments

- Make decisions in real time

- Control physical movements

- Adapt based on physical feedback

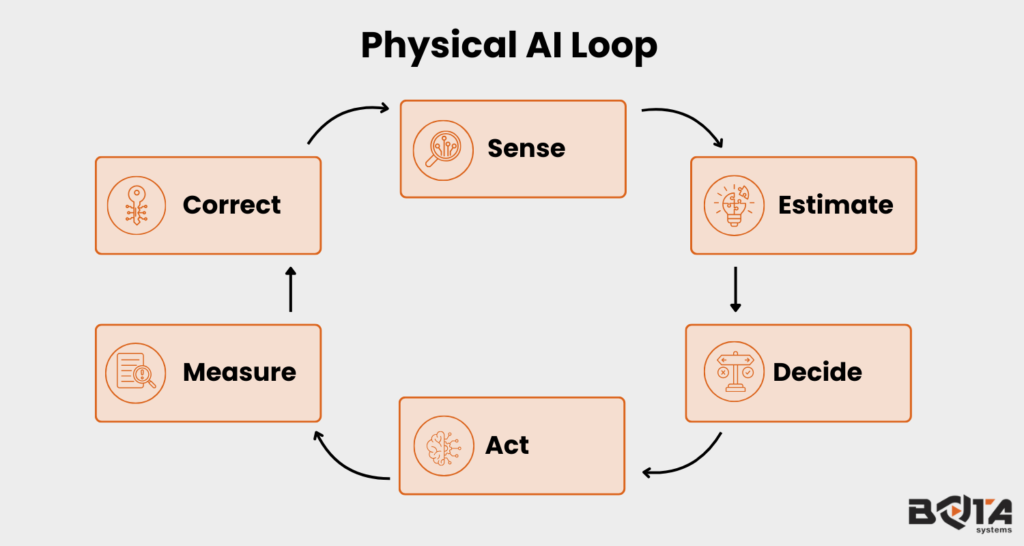

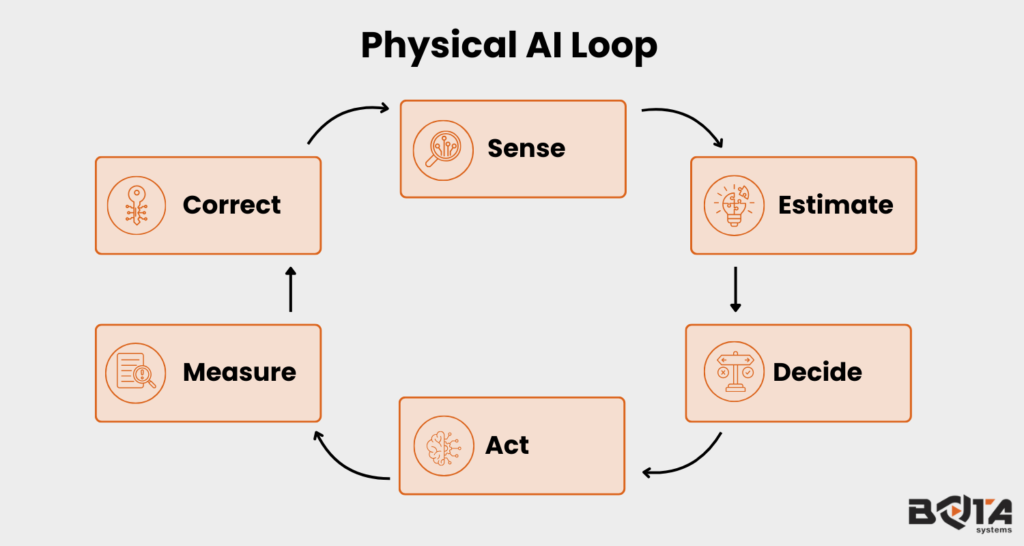

In simple terms: Physical AI is AI that can sense, think, and act in the real world.

If generative AI writes content, physical AI moves a robotic arm. If machine learning detects patterns in data, physical AI adjusts grip force during assembly.

From an engineering standpoint, physical AI can be defined as:

“A closed-loop intelligent control system that integrates perception, decision-making, and physical actuation to autonomously perform tasks in dynamic environments.”

A physical AI system typically includes:

- Sensors – Cameras, LiDAR, force-torque sensors, IMUs

- AI Models – Machine learning, deep learning, reinforcement learning

- Control Systems – Motion planning, trajectory control, feedback control

- Actuators – Motors, grippers, robotic joints

- Feedback Loops – Continuous adjustment based on physical measurements

The key difference from software AI is embodiment — physical AI exists in a body.

From a control and systems perspective, physical AI can be modelled as a cyber-physical closed-loop architecture in which perception modules estimate system states from multimodal sensor data, learning algorithms generate adaptive policies, and feedback controllers execute real-time actuation under dynamic constraints.

Unlike purely data-driven AI, physical AI must account for nonlinear dynamics, contact mechanics, uncertainty, latency, and safety bounds, ensuring stability and robustness while interacting with unpredictable environments.
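
The closed loop described above can be sketched in a few lines. This is a minimal illustration, not a reference architecture: the one-dimensional positioning task, the function names (`sense`, `decide`, `act`), the gain, the speed limit, and the noise level are all assumptions chosen for the example.

```python
import random


def sense(true_position, rng, noise_std=0.002):
    """Perception: return a noisy position reading in metres."""
    return true_position + rng.gauss(0.0, noise_std)


def decide(measured_position, target, gain=4.0, max_speed=0.5):
    """Decision: proportional velocity command, clipped to a speed limit."""
    command = gain * (target - measured_position)
    return max(-max_speed, min(max_speed, command))


def act(true_position, velocity_command, dt):
    """Actuation: integrate the commanded velocity over one control step."""
    return true_position + velocity_command * dt


def run_loop(target=0.30, steps=500, dt=0.01, seed=0):
    """Repeat sense -> decide -> act; feedback corrects the error each cycle."""
    rng = random.Random(seed)
    position = 0.0
    for _ in range(steps):
        measurement = sense(position, rng)
        command = decide(measurement, target)
        position = act(position, command, dt)
    return position


final_position = run_loop()
print(f"final position: {final_position:.3f} m")
```

Even with a noisy sensor, the loop settles close to the target because each cycle acts on a fresh measurement rather than on a precomputed plan.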

How Physical AI Differs from Traditional AI

| Traditional AI | Physical AI |
|---|---|
| Operates in digital environments | Operates in physical environments |
| Outputs predictions or text | Outputs movement and force |
| Evaluated on accuracy | Evaluated on safety, precision, and robustness |
| No physical consequences | Real-world physical impact |

For example:

- A chatbot giving a wrong answer is inconvenient.

- A robot applying excessive force during surgery is dangerous.

That is why physical AI robotics requires reliable sensing and precise control.

Why Physical AI Is Becoming Important

Advances in deep learning, real-time edge computing, high-resolution sensing, and more affordable robotic hardware are accelerating physical AI adoption. At the same time, industries face labor shortages, rising quality standards, and growing demand for flexible automation.

Modern manufacturing and logistics environments are highly variable. Companies no longer seek robots limited to fixed, pre-programmed motions. They require systems that can:

- Adapt to uncertainty

- Manipulate variable objects

- Collaborate safely with humans

- Learn through physical interaction

Physical AI enables this shift from rigid automation to intelligent, responsive systems capable of operating in dynamic real-world environments.

Systems designed for this transition increasingly rely on high-fidelity force and tactile data collection platforms, such as those developed by Bota Systems, to generate the real-world interaction data required for robust physical AI training.

Core Components of Physical AI Systems

To understand physical AI better, let’s break down its core components.

1. Perception

Perception involves multimodal sensor fusion and state estimation, enabling the system to construct a reliable representation of its environment and internal dynamics. Raw signals from cameras, force-torque sensors, IMUs, LiDAR, and tactile arrays are processed through filtering, feature extraction, and deep learning models to estimate object pose, contact states, and environmental conditions.

Examples include:

- Vision systems detecting object geometry and pose

- Force sensors identifying contact forces and torque vectors

- Tactile sensors detecting slip, pressure distribution, and surface properties

Perception converts high-dimensional sensor data into structured, actionable state information.
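
One basic building block of sensor fusion is combining two independent measurements of the same quantity, weighting each by how much it can be trusted. The sketch below uses inverse-variance weighting, which is the measurement-update step of a one-dimensional Kalman filter. The sensor readings and noise figures are made up for illustration.

```python
def fuse(estimate_a, var_a, estimate_b, var_b):
    """Fuse two independent estimates of the same quantity.

    Each estimate is weighted by the inverse of its variance, so the
    less noisy sensor dominates the result.
    """
    weight_a = var_b / (var_a + var_b)
    fused = weight_a * estimate_a + (1.0 - weight_a) * estimate_b
    fused_var = (var_a * var_b) / (var_a + var_b)
    return fused, fused_var


# Hypothetical readings of the distance to an object, in metres.
camera_depth, camera_var = 0.512, 0.010 ** 2   # noisier sensor
lidar_depth, lidar_var = 0.500, 0.002 ** 2     # more precise sensor

depth, depth_var = fuse(camera_depth, camera_var, lidar_depth, lidar_var)
print(f"fused depth: {depth:.4f} m, std: {depth_var ** 0.5:.4f} m")
```

The fused estimate lands between the two readings, closer to the more precise one, and its variance is lower than that of either sensor alone.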

2. Decision-Making

Decision-making integrates learning-based inference with motion planning and control policy generation. Based on estimated states, AI models compute optimal actions under physical constraints and task objectives.

This layer may involve:

- Neural networks for pose prediction and grasp selection

- Reinforcement learning for adaptive policy optimization

- Model-based planners generating dynamically feasible trajectories

Unlike purely digital AI, decisions must satisfy stability, safety, and physical feasibility constraints in real time.
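
A simple way to picture constrained decision-making is to filter candidate actions by feasibility first and only then rank them by a learned score. The sketch below assumes a hypothetical `GraspCandidate` structure with a model-predicted success score; the field names, values, and force limit are illustrative, not taken from any particular grasp planner.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GraspCandidate:
    name: str
    score: float           # model-predicted success score in [0, 1] (hypothetical)
    required_force: float  # grip force needed, in newtons
    reachable: bool        # whether the arm can reach the grasp pose


def select_grasp(candidates, max_force):
    """Return the best-scoring grasp that satisfies the physical constraints."""
    feasible = [
        c for c in candidates
        if c.reachable and c.required_force <= max_force
    ]
    if not feasible:
        return None  # no safe action: the caller should replan or stop
    return max(feasible, key=lambda c: c.score)


candidates = [
    GraspCandidate("top_pinch", score=0.92, required_force=35.0, reachable=True),
    GraspCandidate("side_wrap", score=0.81, required_force=12.0, reachable=True),
    GraspCandidate("edge_pinch", score=0.88, required_force=10.0, reachable=False),
]

chosen = select_grasp(candidates, max_force=20.0)
print(chosen.name if chosen else "no feasible grasp")
```

Here the highest-scoring candidate is rejected because it exceeds the force limit, which shows how feasibility constraints can override the raw preference of the model.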

3. Actuation

Actuation translates computed control commands into physical motion through motors, joints, and compliant mechanisms.

This layer implements low-level control strategies such as PID, impedance control, admittance control, or model predictive control to ensure precise and stable execution.

Examples include:

- A robotic arm regulating grip force during manipulation

- A drone adjusting thrust for dynamic stabilization

- A mobile robot controlling wheel velocities for obstacle avoidance

Actuation is where intelligence becomes physically embodied.
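
As an illustration of low-level control, here is a minimal discrete PID controller regulating grip force. The gripper is modelled as a toy first-order lag, and the gains, time constant, and output limits are assumptions picked so the example converges; a real gripper would need its own model and tuning.

```python
class PID:
    """Discrete PID with output clamping and simple anti-windup."""

    def __init__(self, kp, ki, kd, dt, out_min, out_max):
        self.kp, self.ki, self.kd, self.dt = kp, ki, kd, dt
        self.out_min, self.out_max = out_min, out_max
        self.integral = 0.0
        self.prev_measurement = None

    def update(self, setpoint, measurement):
        error = setpoint - measurement
        # Derivative on the measurement avoids a spike when the setpoint steps.
        if self.prev_measurement is None:
            derivative = 0.0
        else:
            derivative = -(measurement - self.prev_measurement) / self.dt
        self.prev_measurement = measurement

        candidate_integral = self.integral + error * self.dt
        raw = self.kp * error + self.ki * candidate_integral + self.kd * derivative
        output = max(self.out_min, min(self.out_max, raw))
        # Anti-windup: keep the new integral only if the output is not saturated.
        if output == raw:
            self.integral = candidate_integral
        return output


def simulate_grip(setpoint=5.0, steps=2000, dt=0.001, time_constant=0.05):
    """Toy first-order gripper: measured force lags the motor command."""
    pid = PID(kp=2.0, ki=40.0, kd=0.001, dt=dt, out_min=0.0, out_max=20.0)
    force = 0.0
    for _ in range(steps):
        command = pid.update(setpoint, force)
        force += (dt / time_constant) * (command - force)
    return force


final_force = simulate_grip()
print(f"grip force after 2 s: {final_force:.3f} N")
```

The integral term removes the steady-state error, so the simulated force settles at the requested setpoint instead of slightly below it.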

4. Feedback & Adaptation

Physical AI operates within a closed-loop control framework: the system senses, decides, acts, measures the result, and adjusts.

Continuous feedback enables error correction, disturbance rejection, and adaptive behavior in uncertain environments. High-precision force-torque sensing plays a critical role in contact-rich and safety-critical tasks, including:

- Assembly operations

- Surface finishing and polishing

- Surgical manipulation

- Human–robot collaboration

This feedback-driven adaptation distinguishes physical AI from static automation, enabling robust performance in dynamic real-world conditions.

Platforms that combine multi-axis force sensing with haptic teleoperation and kinesthetic teaching — including integrated solutions from Bota Systems — are increasingly used to capture the high-quality manipulation data these applications demand.

Physical AI Examples in the Real World

Let’s explore practical physical AI examples across industries.

1. Industrial Robots with Force Control

Traditional industrial robots follow pre-programmed paths. Physical AI robots adjust force dynamically.

Example: a robot assembling delicate electronic components adjusts its applied pressure when alignment changes, preventing part damage.

These systems rely on real-time force feedback and intelligent control.

2. Autonomous Vehicles

Self-driving cars are among the most prominent physical AI systems.

They:

- Perceive roads via cameras and LiDAR

- Predict vehicle and pedestrian behavior

- Physically steer, brake, and accelerate

Unlike errors in software-only AI, mistakes here have physical consequences.

3. Surgical Robotics

Modern surgical systems incorporate AI-driven assistance.

They:

- Detect tissue boundaries

- Adjust tool precision

- Improve motion stability

Precision sensing and intelligent control are critical.

4. Collaborative Robots (Cobots)

Cobots working alongside humans must:

- Detect human presence

- Adjust force when contact occurs

- Ensure safety during interaction

Physical AI enables robots to understand and respond to human touch.

5. Humanoid Robots

Humanoid systems represent advanced physical AI robotics.

They must:

- Balance dynamically

- Manipulate objects

- Walk on uneven terrain

- Adapt grip force

These capabilities require tightly integrated perception, AI models, and physical feedback.

Challenges in Physical AI

While physical AI is redefining intelligent automation, deploying physical AI systems in real-world environments introduces engineering complexities that extend far beyond conventional software-based AI.

Unlike digital models operating in abstract data spaces, physical AI must function within dynamic mechanical systems governed by physics, uncertainty, and strict safety constraints.

1. Safety and Operational Robustness in Physical AI Systems

Safety remains the foremost challenge in physical AI architectures. Because decisions directly translate into force, torque, and motion, any error in perception, policy inference, or control execution can result in mechanical damage or human injury.

Advanced physical AI systems, therefore, require bounded control policies, collision detection frameworks, compliant actuation strategies, and formally stable feedback controllers.

In collaborative environments—particularly in physical AI robotics applications such as cobots and surgical systems—redundancy, fault detection, and real-time safe-state recovery mechanisms are essential. Robustness is not optional; it is a foundational requirement for embodied intelligence operating alongside humans.
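
A bounded control policy with contact detection can be pictured as a thin safety layer between the AI policy and the actuator. The sketch below is a simplified illustration: the limit values are hypothetical, and commanding zero torque stands in for a safe state, which on real hardware usually means a controlled stop with gravity compensation or brakes rather than simply cutting torque.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyLimits:
    max_torque: float = 8.0           # Nm, hypothetical actuator limit
    contact_force_stop: float = 25.0  # N, hypothetical contact threshold


def safe_command(requested_torque, measured_force, limits):
    """Return (torque_to_apply, stopped).

    If the measured contact force reaches the threshold, command a stop.
    Otherwise clamp the requested torque into the allowed range.
    """
    if abs(measured_force) >= limits.contact_force_stop:
        return 0.0, True
    bounded = max(-limits.max_torque, min(limits.max_torque, requested_torque))
    return bounded, False


limits = SafetyLimits()
print(safe_command(12.0, 3.0, limits))   # request above the torque limit
print(safe_command(2.0, 30.0, limits))   # contact force above the threshold
```

Keeping this check outside the learned policy means the bound holds regardless of what the policy outputs.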

2. Real-Time Computational Constraints and Control Stability

Real-time performance is a defining constraint in physical AI. Unlike cloud-based AI models where latency is tolerable, physical AI systems must operate within deterministic control cycles often measured in milliseconds.

Sensor acquisition, state estimation, neural inference, and low-level actuation must execute synchronously to preserve closed-loop stability.

Even minor computational delays or timing jitter can destabilise dynamic systems, particularly in high-speed manipulation, legged locomotion, or aerial robotics. As a result, physical AI robotics increasingly relies on edge computing, optimised neural architectures, and hardware-accelerated inference to meet strict temporal requirements.
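
The effect of delay on stability can be seen in a toy discrete-time loop. The sketch below assumes a proportional controller acting on a measurement that arrives a few control cycles late, modelled as `x[k+1] = x[k] - gain * x[k - delay]`. The model and the numbers are illustrative and not drawn from a specific robot.

```python
def simulate(gain, delay_steps, steps=200, initial_error=1.0):
    """Simulate x[k+1] = x[k] - gain * x[k - delay_steps].

    Returns the largest error magnitude over the last 20 steps, which is
    near zero if the loop converged and large if it diverged.
    """
    history = [initial_error] * (delay_steps + 1)
    for _ in range(steps):
        delayed_measurement = history[-1 - delay_steps]
        history.append(history[-1] - gain * delayed_measurement)
    return max(abs(value) for value in history[-20:])


no_delay = simulate(gain=0.8, delay_steps=0)
with_delay = simulate(gain=0.8, delay_steps=3)
print(f"residual error, no delay:      {no_delay:.3e}")
print(f"residual error, 3-cycle delay: {with_delay:.3e}")
```

In this toy model the same gain that converges quickly with fresh measurements produces a growing oscillation once the measurement is three cycles old, which is why timing budgets are treated as part of the control design.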

3. Data Efficiency and the Sim-to-Real Transfer Problem

Training embodied physical AI systems directly in real environments is costly, slow, and sometimes unsafe. Although simulation environments accelerate development through large-scale data generation, transferring learned policies from simulation to hardware introduces discrepancies known as the sim-to-real gap.

Variations in friction coefficients, actuator compliance, sensor noise, and contact dynamics often cause performance degradation when models move from virtual to physical environments.

Addressing this challenge requires domain randomization, adaptive control frameworks, online learning mechanisms, and high-fidelity sensing to reduce environmental uncertainty in physical AI robotics deployments.
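
Domain randomization amounts to sampling a different set of physical parameters for every simulated episode so that the learned policy does not overfit to one idealised world. The sketch below shows only the sampling step. The parameter names and ranges are hypothetical and would need to be chosen from measurements of the real system; the simulator itself is not shown.

```python
import random


def sample_sim_params(rng):
    """Draw one randomized set of physical parameters for an episode."""
    return {
        "friction_coefficient": rng.uniform(0.4, 1.0),
        "payload_mass_kg": rng.uniform(0.1, 0.5),
        "force_sensor_noise_std_n": rng.uniform(0.01, 0.10),
        "actuator_delay_ms": rng.choice([1, 2, 4]),
    }


rng = random.Random(42)  # fixed seed so training runs are reproducible
episodes = [sample_sim_params(rng) for _ in range(1000)]
print(episodes[0])
```

Each episode's dictionary would be passed to the simulator before rollout, so the policy sees a spread of friction, mass, noise, and delay values during training.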

4. Physical Grounding and Nonlinear System Dynamics

A defining distinction of physical AI is its need for strong physical grounding. Unlike purely data-driven AI, physical AI systems must operate within hard physical constraints imposed by nonlinear dynamics, frictional interactions, torque limits, structural compliance, and contact mechanics.

Failure to incorporate these constraints can lead to instability, oscillatory behaviour, or unsafe force application. Advanced physical AI architectures therefore integrate model-based control, precise state estimation, and high-resolution force-torque feedback to ensure dynamically feasible and physically stable behaviour during execution.

Future of Physical AI

Physical AI is rapidly evolving in several directions:

- Integration with generative models

- AI-driven motion planning

- Self-learning robots

- Digital twin simulations

- Human-robot symbiosis

Emerging trends include:

- Foundation models for robotics

- Large behavior models (LBMs)

- Multi-modal sensor fusion

- AI-powered adaptive compliance

The long-term vision is clear:

Robots that do not just follow instructions, but understand and adapt physically.

The Role of Reliable Sensing and Precise Control in Physical AI

At the core of every high-performing physical AI system lies a foundation of reliable sensing and precise control. Intelligence alone is insufficient if perception is noisy or actuation is unstable. In real-world deployments, especially in physical AI robotics, small errors in force estimation or millisecond-level delays in motion control can lead to performance degradation, product damage, or safety risks.

High-resolution force-torque sensing enhances state estimation accuracy, enables compliant manipulation, and supports stable closed-loop control under dynamic contact conditions.

When integrated with advanced control strategies such as impedance or model-based control, precise sensing transforms AI-generated policies into predictable, repeatable physical behavior.
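
Impedance control makes the robot behave like a virtual spring and damper around a desired pose, so contact force follows from the chosen stiffness rather than from position error alone. The sketch below simulates a one-dimensional end effector pressing against a stiff wall. The mass, wall stiffness, damping, and target values are assumptions made for illustration.

```python
def impedance_force(x_desired, x, v_desired, v, stiffness, damping):
    """Virtual spring-damper law: F = K (x_d - x) + D (v_d - v)."""
    return stiffness * (x_desired - x) + damping * (v_desired - v)


def simulate_contact(stiffness, damping=40.0, mass=1.0, wall=0.10,
                     wall_stiffness=20000.0, target=0.12,
                     dt=0.0005, steps=8000):
    """Push a 1-D end effector toward a target that lies behind a wall.

    Returns the steady contact force on the wall in newtons.
    """
    x, v = 0.0, 0.0
    for _ in range(steps):
        f_cmd = impedance_force(target, x, 0.0, v, stiffness, damping)
        penetration = max(0.0, x - wall)
        f_wall = -wall_stiffness * penetration  # wall pushes back when touched
        acceleration = (f_cmd + f_wall) / mass
        v += acceleration * dt  # semi-implicit Euler integration
        x += v * dt
    return wall_stiffness * max(0.0, x - wall)


soft = simulate_contact(stiffness=200.0)
stiff = simulate_contact(stiffness=2000.0)
print(f"contact force, soft impedance:  {soft:.2f} N")
print(f"contact force, stiff impedance: {stiff:.2f} N")
```

With the same unreachable target, the softer virtual spring settles at a much lower contact force, which is the behaviour that makes impedance control attractive for contact-rich tasks.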

For organizations deploying intelligent automation at scale, sensing quality directly determines system robustness and long-term reliability. This is where specialized sensing technologies become mission-critical.

Bota Systems develops compact, high-bandwidth multi-axis force-torque sensors designed for precision robotics and contact-sensitive applications.

By delivering accurate 6-axis force measurement with low noise and real-time communication compatibility, such sensing solutions enable stable impedance control, compliant manipulation, and safe human–robot collaboration in advanced physical AI robotics systems.

Final Thoughts

Physical AI marks the shift from digital intelligence to embodied intelligence. While traditional AI processes data, physical AI manipulates reality.

From autonomous vehicles and surgical robots to adaptive manufacturing systems, physical AI robotics is transforming industries where precision, safety, and adaptability matter most. As sensing, control systems, and AI models continue to improve, physical AI will become foundational to next-generation automation.

The future of AI isn’t just software.
